Platform engineering has a growing problem: AI agents that can explain your infrastructure but can’t operate on it. The bottleneck isn’t the model. It’s that most internal platforms were designed around humans clicking buttons, not around tools calling APIs. This post walks through how we approached that with the Kubermatic Developer Platform (KDP), and what we learned when we put MCP and Skills on top of it.

Infrastructure at Most Companies Today

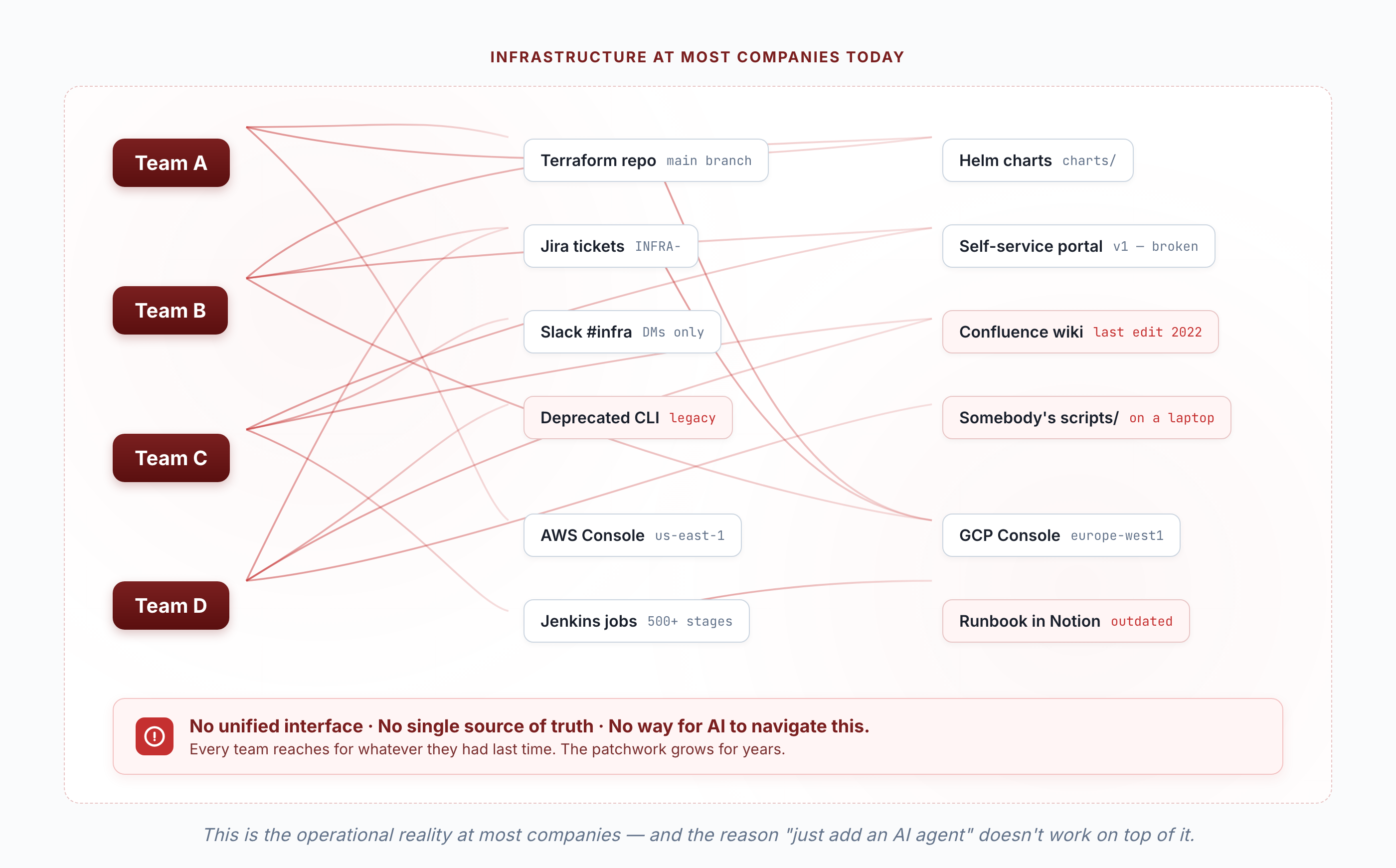

If you’ve worked at any company with more than a few teams, you already know what infrastructure management looks like in practice. It’s a dozen systems, or even hundreds, scattered throughout the company.

Team A has a Terraform module in a Git repo. Team B uses a self-service portal for namespaces but files Jira tickets for databases. Team C just asks in Slack and hopes someone on the platform team sees it. There’s a Redis instance in staging that nobody is sure who owns. The “documentation” is a Confluence page from 2022 that links to a CLI tool that was deprecated last year.

This happens when every team solves its immediate needs with whatever it has. Over a couple of years you end up with a patchwork of provisioning methods, access patterns, and knowledge that lives only in people’s heads.

And this is exactly why AI can’t help with infrastructure at most companies today. You can’t point an AI agent at this mess and expect it to figure out which Terraform module to run, which Slack channel to ask in, or which wiki page is still accurate. There’s no unified interface.

This is where KDP fits in. A unified platform creates the API surface that makes AI-assisted infrastructure management possible. One catalog to discover services. One API to provision them. One interface to check status and get credentials. When everything goes through the same surface, an AI agent with access to it can start being useful.

MCP and Skills — Giving AI Hands

Most AI assistants are stuck in a loop where they can tell you how to run your infrastructure but can’t actually touch it. You ask “what databases do we have running?” and get a generic answer about how to use kubectl get. Not really helpful.

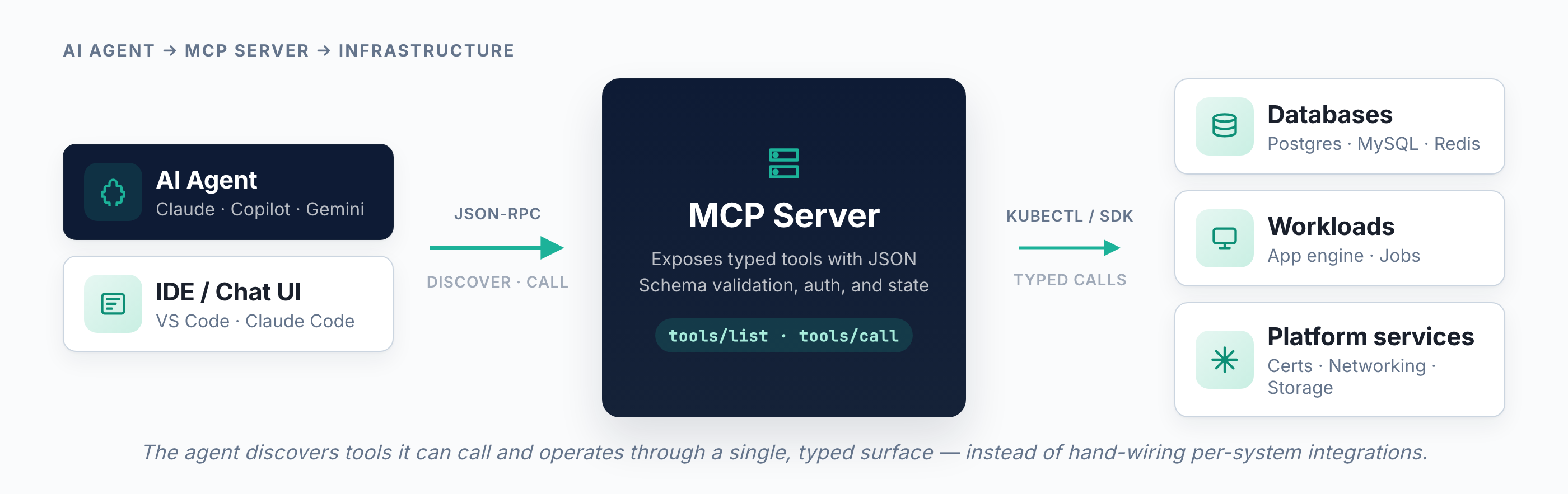

Model Context Protocol fixes this. It’s an open protocol, started at Anthropic, now under the Linux Foundation, that lets AI models call external tools. The model discovers what tools are available, figures out the parameters, and calls them when you ask for something. No custom integration per system. You run an MCP server that wraps your API, and any AI client that speaks MCP can use it.
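To make the discover-then-call flow concrete, here is a minimal in-process sketch of the two tool-related MCP requests, tools/list and tools/call. It is not the official SDK and has no transport layer. The list_databases tool and its inventory are hypothetical stand-ins for a real platform API.

```python
import json

# Hypothetical tool: a real server would query the platform API here.
def list_databases(workspace: str) -> list[str]:
    inventory = {"team-a": ["orders-postgres"], "team-b": []}
    return inventory.get(workspace, [])

# Each tool advertises a name, a description, and a JSON Schema for its arguments.
TOOLS = {
    "list_databases": {
        "description": "List database instances in a workspace.",
        "inputSchema": {
            "type": "object",
            "properties": {"workspace": {"type": "string"}},
            "required": ["workspace"],
        },
        "handler": list_databases,
    }
}

def handle(request: dict) -> dict:
    """Answer one JSON-RPC request for the two tool-related MCP methods."""
    method = request["method"]
    if method == "tools/list":
        # Discovery: the client learns which tools exist and what they accept.
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif method == "tools/call":
        # Invocation: the client names a tool and passes arguments.
        params = request["params"]
        output = TOOLS[params["name"]]["handler"](**params["arguments"])
        result = {"content": [{"type": "text", "text": json.dumps(output)}], "isError": False}
    else:
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"}}
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}

listing = handle({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
call = handle({"jsonrpc": "2.0", "id": 2, "method": "tools/call",
               "params": {"name": "list_databases", "arguments": {"workspace": "team-a"}}})
```

The model never sees the handler, only the name, description, and schema. That is why the wording of those three fields matters so much in practice.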

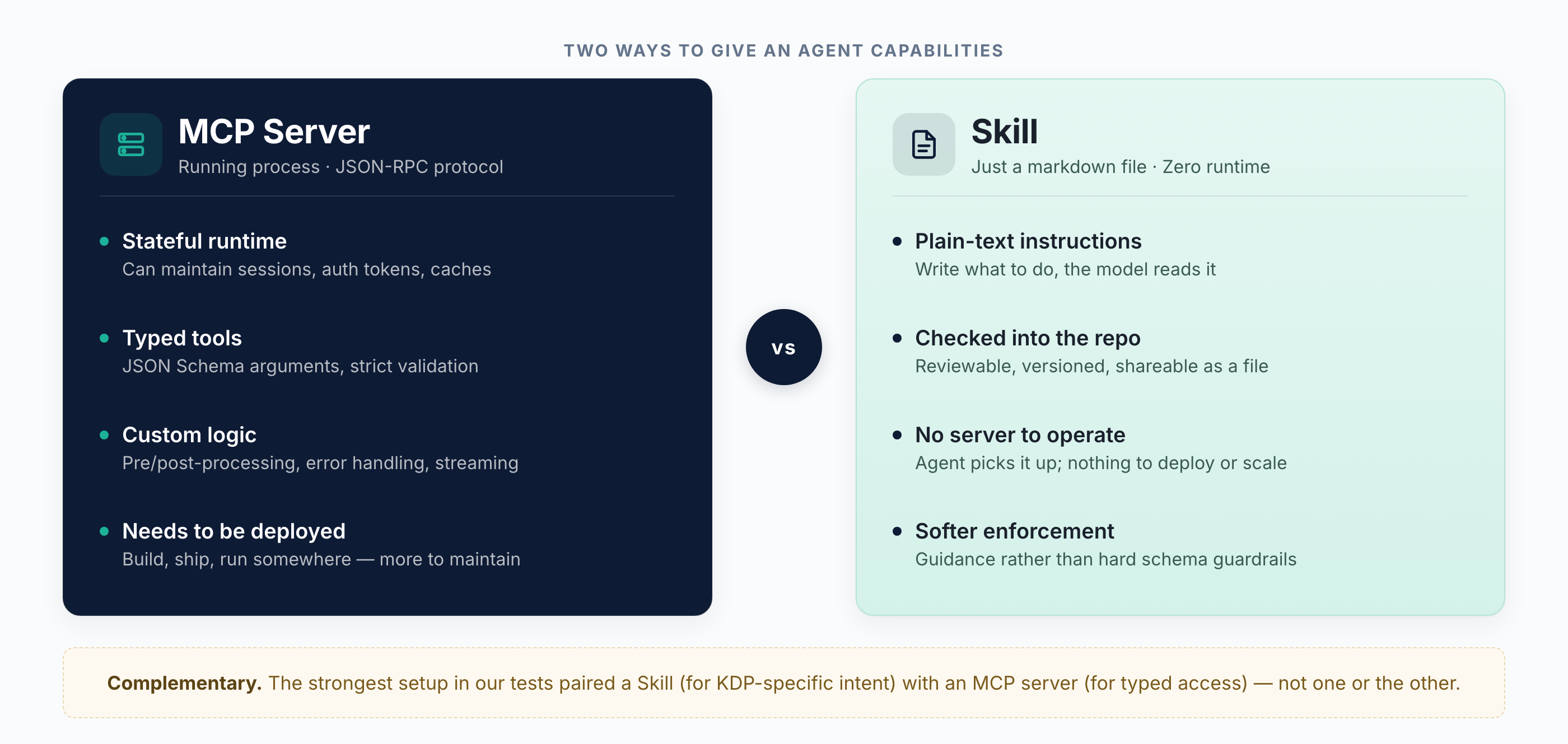

There’s a simpler option too: skills. In Claude Code, a skill is just a markdown file that tells the agent how to do a specific job. No server, nothing to deploy. You write something like “when the user asks to enable a service, run these kubectl commands in this order, and don’t mention APIBindings, just say you enabled it.” Drop the file in a folder and the agent picks it up.

Both have tradeoffs. MCP servers are more capable: they can maintain state, run complex logic, and handle auth. Skills are simpler to write and share, but they are limited to whatever commands you allow. Combining the two can sometimes give a better result. We built both for KDP, which we’ll get to.

MCP adoption has been fast: 97 million monthly SDK downloads as of early 2026, with support from Anthropic, OpenAI, Google, Microsoft, and yes, Kubermatic as a silver founding member of the Agentic AI Foundation ;)

kcp — Kubernetes Without the Containers

To understand KDP you need to understand kcp, because KDP is built on it.

kcp takes the Kubernetes API machinery, the part where you declare what you want in YAML, apply it, and a controller reconciles it, and strips out everything specific to workloads. No Pods, no Deployments, no nodes. What’s left is a generic multi-tenant API server that you can use to build platforms on.

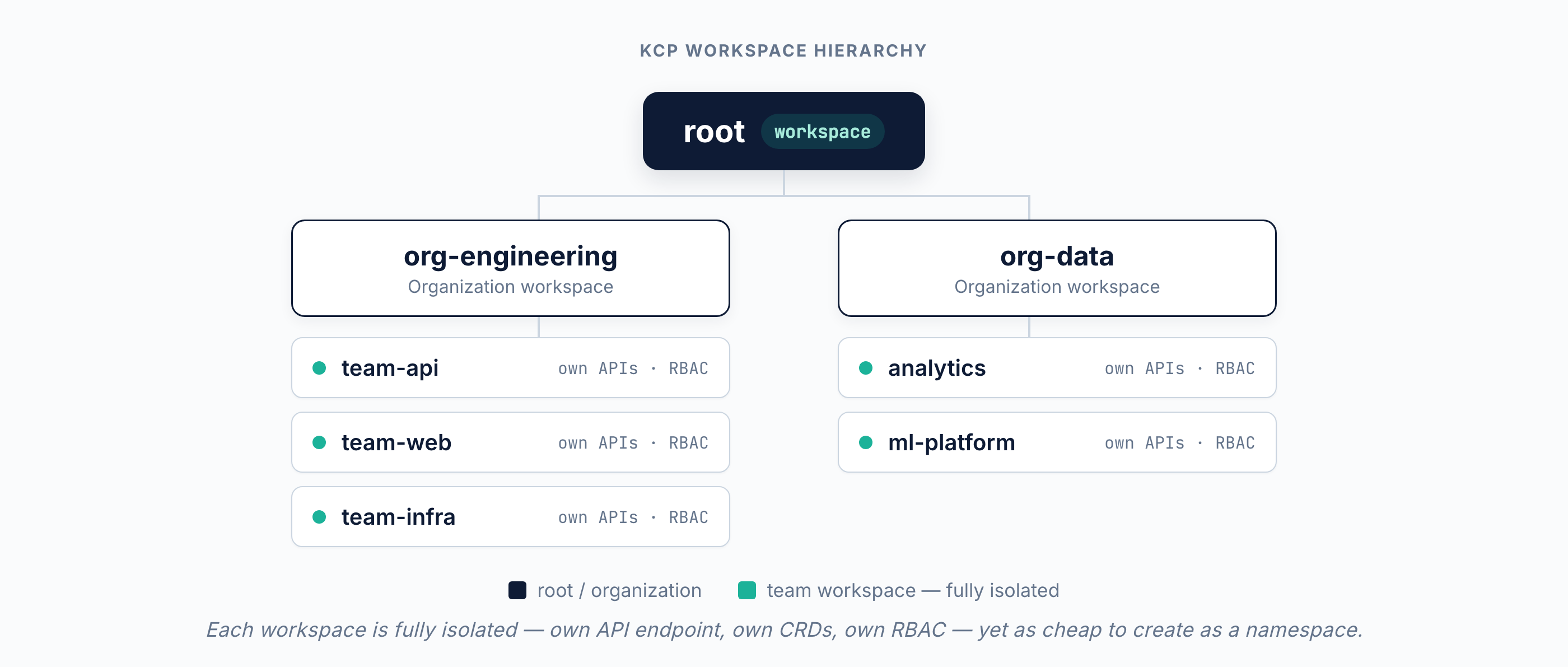

The central idea is workspaces. A workspace in kcp is a fully isolated environment with its own API endpoint, its own set of APIs, and its own RBAC. It is like having your own Kubernetes cluster, except it’s as cheap to create as a namespace. The project’s ambition is a million workspaces across ten thousand shards, which only works if the overhead per workspace is tiny.

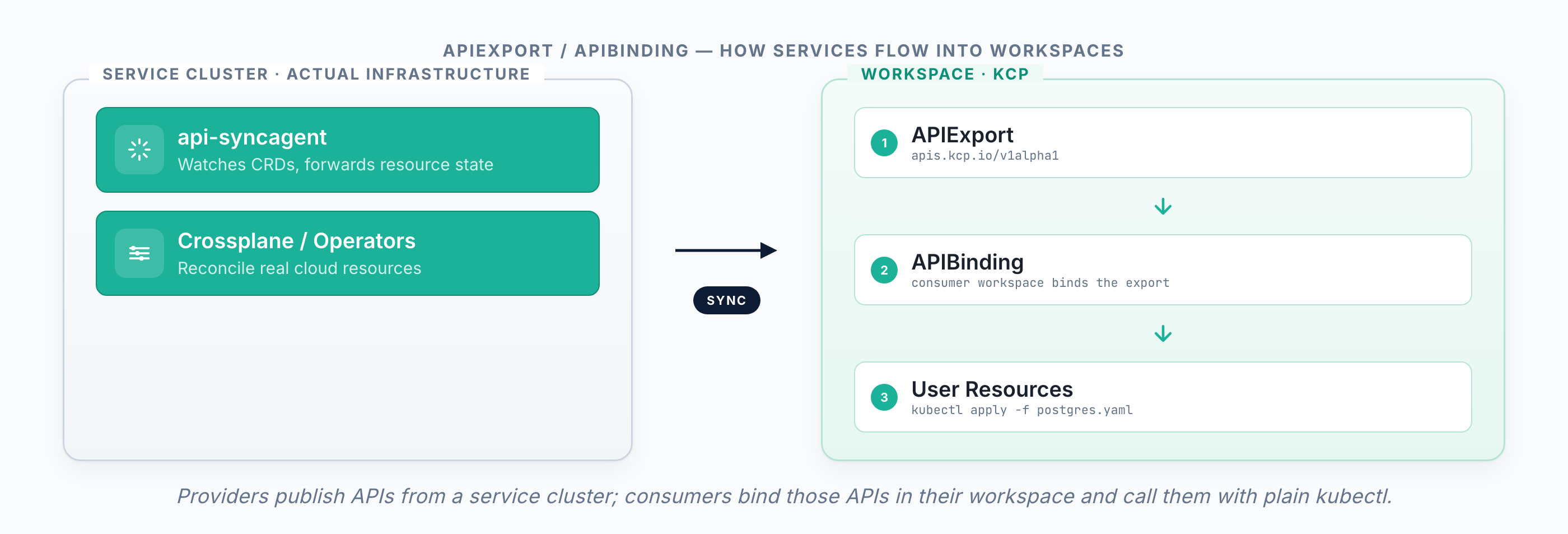

The part that matters for this blog post is what kcp calls “APIs as a Service.” Any workspace can advertise and provide a service — an API. The API provider (workspace) publishes a set of resource types through an APIExport, essentially announcing, “I offer these APIs to anyone who wants them.” A consumer in another workspace binds to that API by creating an APIBinding. Since all workspaces look like Kubernetes clusters, you can use kubectl to export, bind, and call the APIs! So simple.
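As a rough illustration of the export and bind pair, the sketch below builds both objects as plain dictionaries and prints the binding as JSON. The field layout follows our understanding of the apis.kcp.io/v1alpha1 API and should be treated as an assumption, since newer kcp releases may use a different version. All names and workspace paths are hypothetical.

```python
import json

def api_export(name: str, schemas: list[str]) -> dict:
    """Provider side: announce which resource schemas this workspace offers."""
    return {
        "apiVersion": "apis.kcp.io/v1alpha1",  # assumed API version
        "kind": "APIExport",
        "metadata": {"name": name},
        "spec": {"latestResourceSchemas": schemas},
    }

def api_binding(name: str, provider_path: str, export_name: str) -> dict:
    """Consumer side: bind to an export that lives in another workspace."""
    return {
        "apiVersion": "apis.kcp.io/v1alpha1",  # assumed API version
        "kind": "APIBinding",
        "metadata": {"name": name},
        "spec": {"reference": {"export": {"path": provider_path, "name": export_name}}},
    }

# Hypothetical provider workspace path and export name.
export = api_export("databases.example.com", ["v1.postgresqls.databases.example.com"])
binding = api_binding("databases", "root:providers:databases", "databases.example.com")

print(json.dumps(binding, indent=2))
```

Because kubectl accepts JSON as well as YAML, the printed binding could be piped into kubectl apply -f - while pointed at the consumer workspace.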

The simplicity of publishing and consuming services (APIs) is key to addressing platform engineering bottlenecks. First, the platform team does not need to act as a gatekeeper for every interaction between teams. Second, we can bring necessary capabilities to development teams at the greatly increased speed enabled by AI. This is what we do in KDP, building on top of kcp.

More at docs.kcp.io and github.com/kcp-dev/kcp.

KDP — The Actual Platform

KDP is what happens when you take kcp and build an Internal Developer Platform on top of it. It went GA in January 2026.

The bottom line: developers shouldn’t need to understand Kubernetes or kcp internals to get a database. They open a catalog, pick PostgreSQL, and have it running. All of kcp’s multi-tenancy and API machinery, hidden behind something simple.

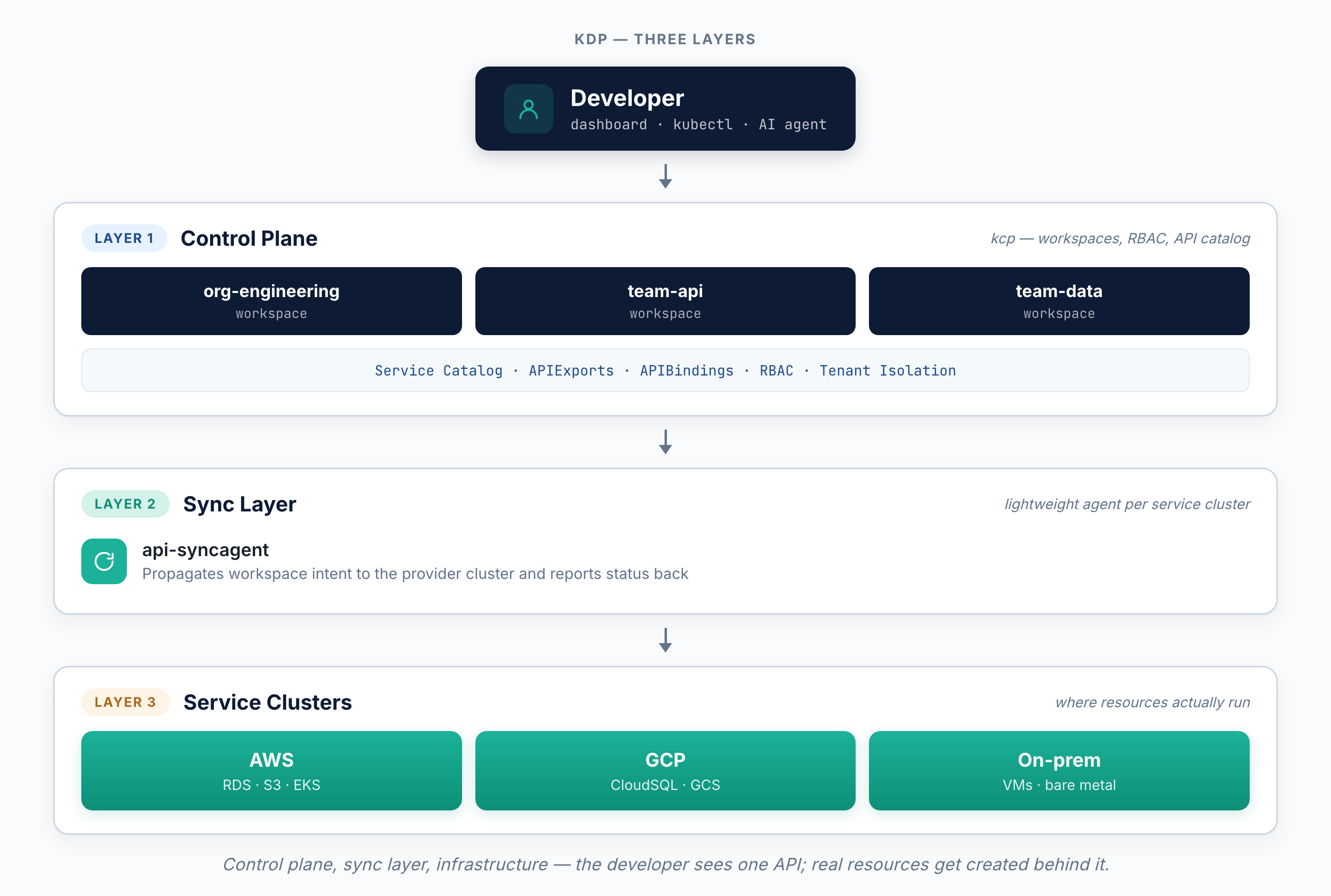

Under the hood, there are three layers.

The control plane is kcp. It manages workspaces, the service catalog, and isolation between tenants. This is where APIExports and APIBindings live, the mechanism that lets service providers publish and developers consume services.

The sync layer is a lightweight agent called api-syncagent that runs on each service cluster. When a developer creates a PostgreSQL resource through KDP, the sync agent picks it up and syncs it over to the service cluster (the infrastructure side), which results in an actual database on AWS, GCP, or wherever the service points to.
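The core idea of a sync layer can be sketched as a small reconcile function. This is not api-syncagent’s actual code. Two in-memory dictionaries stand in for the platform side and the service cluster, and the function only copies specs one way and cleans up orphans.

```python
def reconcile(platform: dict[str, dict], service_cluster: dict[str, dict]) -> list[str]:
    """Make the service cluster match the platform side; return the actions taken."""
    actions = []
    for name, obj in platform.items():
        if name not in service_cluster:
            # New object on the platform side: create a copy downstream.
            service_cluster[name] = {"spec": dict(obj["spec"])}
            actions.append(f"create {name}")
        elif service_cluster[name]["spec"] != obj["spec"]:
            # Spec drifted: the platform side is the source of truth.
            service_cluster[name]["spec"] = dict(obj["spec"])
            actions.append(f"update {name}")
    for name in list(service_cluster):
        if name not in platform:
            # Object was deleted on the platform side: remove the copy.
            del service_cluster[name]
            actions.append(f"delete {name}")
    return actions

# Hypothetical resource created by a developer through the platform.
platform = {"orders-db": {"spec": {"version": "16"}}}
cluster: dict[str, dict] = {}

first = reconcile(platform, cluster)   # creates the downstream copy
second = reconcile(platform, cluster)  # nothing left to do
```

Running the function twice with no changes in between produces no actions the second time, which is the property a reconcile loop depends on.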

The interface layer is a dashboard plus full kubectl. Anything you do in the UI, you can do through the API. This is important because it means automation and LLMs get the same capabilities as a human clicking through the dashboard.

Three personas use KDP. Platform owners manage the installation and workspace hierarchy. Service providers run infrastructure and publish to the catalog. They do this on their own, without waiting for the platform team. Developers browse the catalog, enable what they need, and create resources.

Full docs are at docs.kubermatic.com/developer-platform.

Enabling Agentic Workflows via KDP

To cover agentic use cases, KDP provides three things: a skill that wraps kubectl and the kcp plugin, an MCP server, and skills that simplify working with that MCP server. We tested them on the same real-life scenario: finding and provisioning services for an app to run.

The scenario:

- List all available services and show which databases we can use

- Create a customer workspace containing a production project with three services enabled (certificate management, databases, and app engine), create one instance of each, and mount the database credentials into the app engine instance

- Delete the customer workspace

With only the skill involved, the run worked great, without any issues. With the MCP server involved, the agent forgot to accept the right permission claims, which left the services effectively unusable. We could have told the AI, of course, but the goal was to see what happens without much knowledge of the underlying system. There are certainly improvements we can make to our MCP implementation that would mitigate the problem, but we think the root cause is that the AI did not combine the skill and the MCP server and instead used just one of them.
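The permission-claim failure is the kind of thing a tool could check mechanically before reporting success. The sketch below compares the claims an export requests with the claims a binding has accepted. The permissionClaims layout and the Accepted state follow our reading of kcp’s v1alpha1 API and are an assumption here. The example objects are hypothetical.

```python
def unaccepted_claims(export: dict, binding: dict) -> list[tuple[str, str]]:
    """Return (group, resource) pairs the export requests but the binding has not accepted."""
    accepted = {
        (claim.get("group", ""), claim["resource"])
        for claim in binding.get("spec", {}).get("permissionClaims", [])
        if claim.get("state") == "Accepted"
    }
    requested = [
        (claim.get("group", ""), claim["resource"])
        for claim in export.get("spec", {}).get("permissionClaims", [])
    ]
    return [claim for claim in requested if claim not in accepted]

# Hypothetical export that needs access to secrets and configmaps in the consumer workspace.
export = {"spec": {"permissionClaims": [
    {"group": "", "resource": "secrets", "all": True},
    {"group": "", "resource": "configmaps", "all": True},
]}}

# Hypothetical binding where only one of the two claims was accepted.
binding = {"spec": {"permissionClaims": [
    {"group": "", "resource": "configmaps", "all": True, "state": "Accepted"},
]}}

missing = unaccepted_claims(export, binding)
```

A non-empty result is a signal that the service is bound but will not work as expected, which matches what we saw in the MCP-only runs.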

In fact, we ran the scenario several times in three configurations, each alongside a bare Kubernetes MCP server:

- KDP skill only: always good

- KDP MCP only: always some kind of failure

- KDP skill + KDP MCP: right only some of the time

Safety and RBAC

In recent talks about AI in the infrastructure space, one of the first questions from platform engineers is what stops the agent from doing something stupid. Fair question.

The answer with KDP is that the AI agent goes through the exact same API, permission model, and RBAC as a human. There’s no separate path. If a developer can’t delete production databases from their workspace, neither can the AI agent operating in that workspace. kcp’s workspace isolation and RBAC apply regardless of whether the request comes from someone clicking a button in the dashboard or an AI agent calling kubectl.

This is one of the advantages of having a unified API surface. You don’t need a separate permission system for AI. You scope the agent to a workspace, give it a kubeconfig with the right RBAC, and the guardrails are already there. And even for multi-workspace operations it is still constrained by your RBAC.
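The “no separate path” idea fits in a few lines. This toy authorizer is not kcp’s RBAC implementation. It only shows the shape of the argument: the decision depends on subject, workspace, verb, and resource, and never on which client sent the request. All names and grants are hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Request:
    subject: str    # identity taken from the kubeconfig
    workspace: str
    verb: str
    resource: str
    client: str     # "dashboard", "kubectl", "ai-agent": recorded, never used for the decision

# Hypothetical grants: (subject, workspace) -> allowed (verb, resource) pairs.
GRANTS = {
    ("dev-alice", "team-a"): {("get", "postgresqls"), ("create", "postgresqls")},
}

def allowed(req: Request) -> bool:
    """Authorize purely on identity and action; the client type plays no role."""
    return (req.verb, req.resource) in GRANTS.get((req.subject, req.workspace), set())

human_create = Request("dev-alice", "team-a", "create", "postgresqls", "dashboard")
agent_create = Request("dev-alice", "team-a", "create", "postgresqls", "ai-agent")
agent_delete = Request("dev-alice", "team-a", "delete", "postgresqls", "ai-agent")
agent_other_ws = Request("dev-alice", "team-b", "get", "postgresqls", "ai-agent")
```

An agent running with the developer’s kubeconfig gets exactly the developer’s answers: create is allowed, delete is not, and another team’s workspace is out of reach.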

On the MCP side, we get the KDP benefits plus one extra: the server exposes only the tools it was programmed to expose, with JSON Schema validation on every argument. Skills are softer here. There’s an allowed-tools field, but it’s more of a guardrail than hard enforcement.
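To show what argument validation buys you, here is a hand-rolled check covering a small subset of JSON Schema (required, type, enum, additionalProperties). A real server would use a full JSON Schema validator. The tool schema below is hypothetical.

```python
TYPES = {"string": str, "integer": int, "boolean": bool}

def validate(arguments: dict, schema: dict) -> list[str]:
    """Return a list of problems; an empty list means the arguments are acceptable."""
    errors = []
    props = schema.get("properties", {})
    for key in schema.get("required", []):
        if key not in arguments:
            errors.append(f"missing required argument: {key}")
    for key, value in arguments.items():
        if key not in props:
            if schema.get("additionalProperties") is False:
                errors.append(f"unexpected argument: {key}")
            continue
        rule = props[key]
        expected = TYPES.get(rule.get("type"))
        # bool is a subclass of int in Python, so reject it explicitly for "integer".
        wrong_type = expected is not None and (
            not isinstance(value, expected)
            or (expected is int and isinstance(value, bool))
        )
        if wrong_type:
            errors.append(f"{key}: expected {rule['type']}")
        elif "enum" in rule and value not in rule["enum"]:
            errors.append(f"{key}: must be one of {rule['enum']}")
    return errors

# Hypothetical schema for a "create database" tool.
SCHEMA = {
    "type": "object",
    "properties": {
        "workspace": {"type": "string"},
        "engine": {"type": "string", "enum": ["postgresql", "mysql"]},
        "replicas": {"type": "integer"},
    },
    "required": ["workspace"],
    "additionalProperties": False,
}

ok = validate({"workspace": "team-a", "engine": "postgresql", "replicas": 2}, SCHEMA)
bad = validate({"engine": "oracle", "force": True}, SCHEMA)
```

The second call is rejected before any tool code runs. That is the practical difference from a skill, where a malformed or unexpected command is only caught if the agent follows its instructions.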

The combination is stronger than either part. KDP provides platform-level isolation: workspaces, RBAC, and tenant boundaries. MCP provides tool-level control. Together, you get an AI agent with security guardrails.

Conclusion

To match the velocity of AI development and the rise of autonomous agents, platform engineering must move toward a decentralized, API-first service model. KDP, powered by the kcp control plane, provides the machine-readable foundation required for both human developers and AI agents to interact with infrastructure programmatically.

Because KDP uses standard Kubernetes APIs, is extensible, and is purpose-built for API orchestration, it is agent-ready. This allows AI agents to independently discover capabilities, manage complex dependencies, and execute service compositions without human intervention or manual gatekeeping. By treating infrastructure as composable artifacts, KDP removes the platform team from the critical path, enabling a marketplace where the speed of delivery is limited only by the speed of the agent’s logic. This ensures that the platform remains a force multiplier for both traditional “Golden Path” applications and the next generation of agentic workflows.