We’re happy to announce the release of Kubermatic Kubernetes Platform (KKP) 2.30! Over the past months, we have focused our engineering efforts on expanding capabilities and improving usability across the core platform and the KKP Dashboard.

This release includes over 100 merged pull requests and focuses on three main areas: better support for AI workloads, modern traffic management with Gateway API, and stronger observability and operational control.

Here’s everything that’s new.

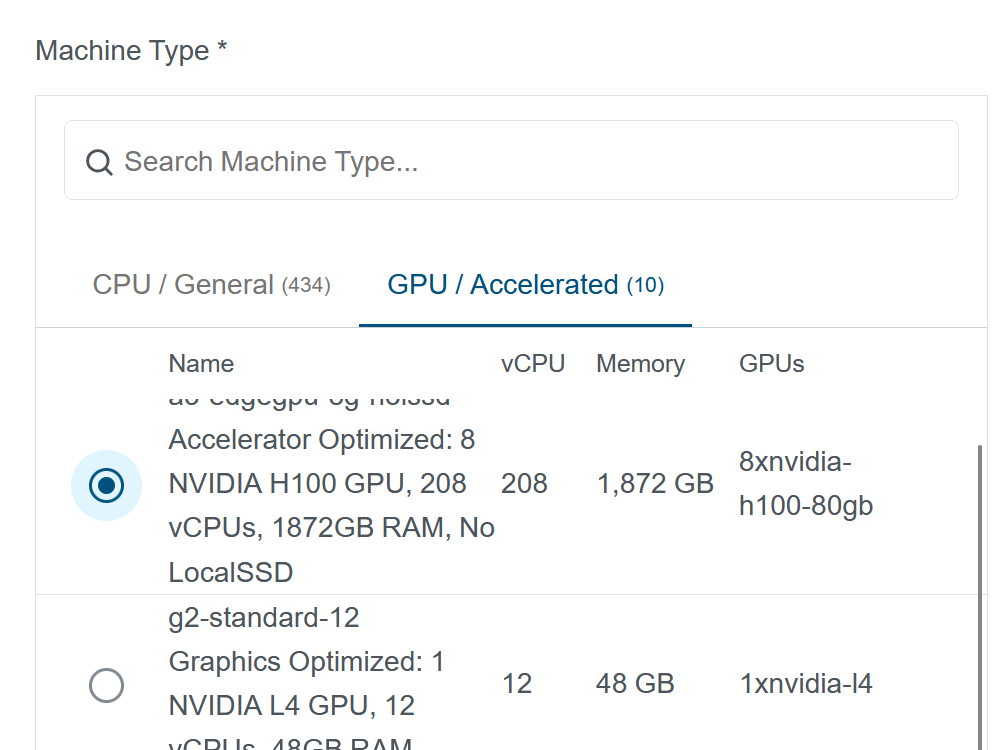

AI-Ready Infrastructure: Advanced GPU Machine Type Selector

As AI applications and machine learning workloads evolve, optimizing hardware allocation is critical. KKP 2.30 introduces an improved Machine Type Selector built specifically for GPU clusters.

It makes it easier to find and compare GPU-optimized instance types across providers, allowing you to provision clusters that match your workload requirements, without overprovisioning or wasted resources.

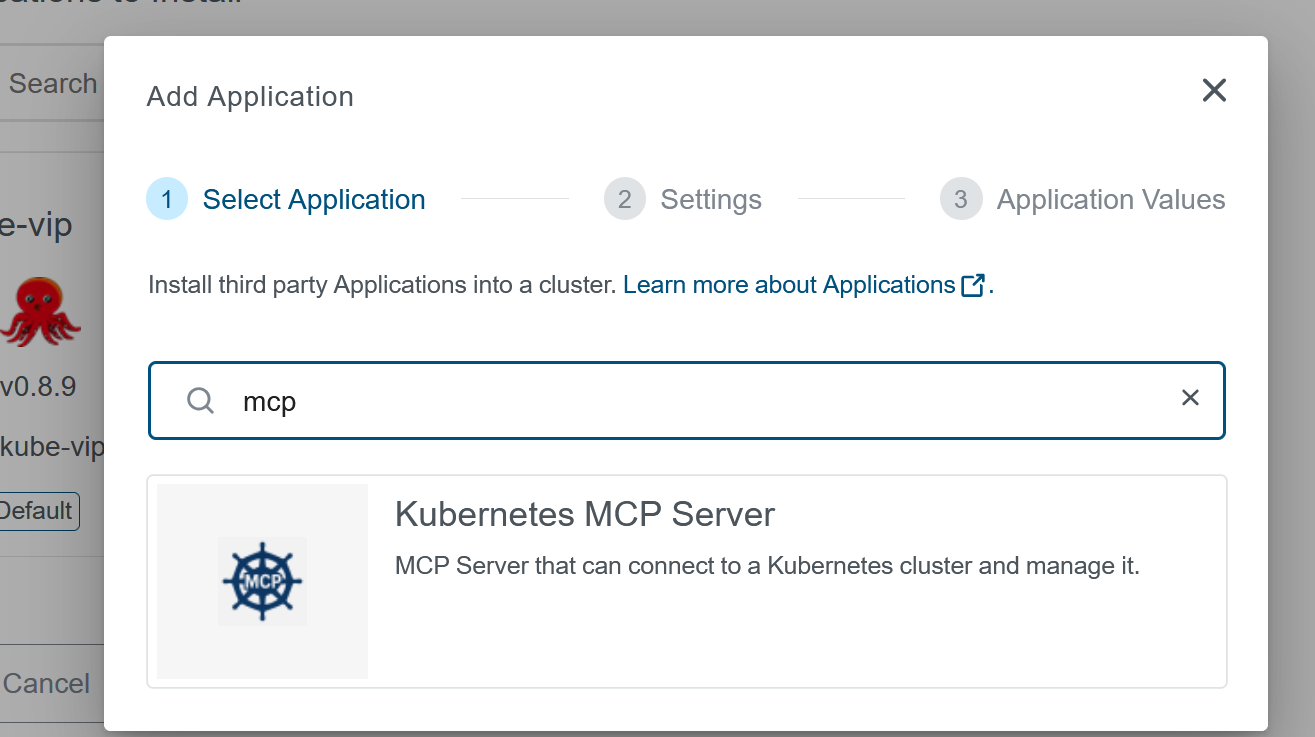

Kubernetes MCP Server Integration

In KKP 2.30, we have introduced the Kubernetes MCP (Model Context Protocol) Server as a deployable application.

By deploying the MCP server, you can directly interface with and manage your KKP clusters using LLM-powered assistants. This opens the door to conversational operations and AI-assisted troubleshooting.

You can read more about the MCP server and how it helps you manage user clusters in this documentation page.

Next-Generation Traffic Routing with Gateway API

With NGINX Ingress support retiring in March 2026, KKP 2.30 introduces Gateway API support as a modern alternative for external traffic routing.

- IAP Integration: We’ve added Gateway API support to the IAP (Identity-Aware Proxy) chart, allowing your deployments to natively use `HTTPRoute` resources instead of standard Ingress objects.

- Easy Migration & Opt-in: You can easily enable this via the `--enable-gateway-api` operator flag or by setting `migrateGatewayAPI: true` in your Helm values.
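For readers new to Gateway API, here is a minimal sketch of what an `HTTPRoute` looks like in place of an Ingress object. The resource names, namespace, hostname, and backend service are hypothetical placeholders, not values shipped with the IAP chart, and the manifests the chart actually renders may differ.

```yaml
# Minimal HTTPRoute sketch; all names and the hostname are hypothetical.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: example-route
  namespace: example
spec:
  # The Gateway this route attaches to (assumed to exist already).
  parentRefs:
    - name: example-gateway
  hostnames:
    - "app.example.com"
  rules:
    # Send every request under "/" to the backend Service on port 80.
    - matches:
        - path:
            type: PathPrefix
            value: /
      backendRefs:
        - name: example-service
          port: 80
```

The `parentRefs` entry attaches the route to a Gateway, which takes over the role the ingress controller played for Ingress objects.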

Migration to Grafana Alloy

With Grafana Agent reaching deprecation, KKP 2.30 is officially upgrading our User Monitoring, Logging, and Alerting (MLA) stack to Grafana Alloy.

The migration from Promtail to Alloy for user-cluster MLA will be transparent to end users as well as KKP administrators. In the future, we also plan to take advantage of more Alloy features.

Advanced KubeVirt & KubeLB Capabilities

Virtual Machines running natively on Kubernetes continue to be a massive focus for enterprise hybrid environments. In 2.30, we’ve enriched our KubeVirt provider capabilities:

- Custom Environment Variables for Machine Controller: You can now inject custom environment variables, such as the cluster ID and project ID, into the KubeVirt provider via the machine controller. These values are then applied as labels on the KubeVirt VMs.

- KubeLB Enhancements: We’ve updated KubeLB to v1.3.1 and made the platform more flexible. You can now override the image for the KubeLB Cloud Controller Manager (CCM) directly using `.spec.userCluster.kubelb` within your `KubermaticConfiguration`.

Tighter Security, Authentication, & Identity Management

Security and strict tenant isolation are never an afterthought. We’ve grouped a series of enhancements that give administrators much tighter control over authentication:

- OAuth2-Proxy Flexibility: Users can now pass additional configuration arguments directly to `oauth2-proxy` pods. This addresses an edge case where Azure Entra sent very long query parameters to oauth2-proxy, causing authentication to fail for the seed MLA and user-cluster MLA endpoints. You can add extra arguments per IAP deployment or globally for all deployments. Check the linked PR for a short example of how to customize this.

- Orphan Binding Cleanup: We resolved a persistent edge case where deleting a User or Project left behind orphaned `UserProjectBinding` resources in the background. KKP 2.30 ensures your RBAC and multi-tenant environments stay clean and secure.

- Partial Configurations of Cluster CR: Component settings fields in the Cluster CRD now allow only the relevant attributes to be specified, reducing the friction of partial configurations and policy template selectors across the API. The linked PR has an example showing how this change makes overriding easier for cluster specs.

Deep Platform Polish & Stability

A significant portion of this cycle went toward hardening the system, updating core dependencies, and upgrading the user experience:

- Cortex 1.16.1 Upgrades: We’ve upgraded Cortex to fix an issue where the `cortex-ingester` was consuming excessive storage space and logging repeated errors, which significantly improves MLA stability. Note: Please also check the release notes for a potential gotcha during the upgrade.

- Control Plane Resiliency: Administrators can now set toleration overrides for control plane components, giving you tighter placement control over critical cluster infrastructure. With this change, you can ensure that the control plane components of high-priority user clusters are scheduled on dedicated MachineDeployments.

- Storage Fixes: For those running bleeding-edge clusters, we’ve resolved the `azurefile-csi` edge cases when running Kubernetes versions 1.32 and newer. More information in this PR.
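As a reminder of how the tolerations mentioned above work in Kubernetes, the fragment below is a minimal sketch of a toleration that lets a pod be scheduled onto nodes carrying a matching taint. The `dedicated` key and `control-plane` value are hypothetical, and the exact field where KKP accepts these overrides is deliberately not shown; please check the KKP documentation for that.

```yaml
# Standard Kubernetes toleration; the key and value are hypothetical.
# It matches nodes tainted with dedicated=control-plane:NoSchedule.
tolerations:
  - key: dedicated
    operator: Equal
    value: control-plane
    effect: NoSchedule
```

Keep in mind that a toleration only permits scheduling onto tainted nodes. To keep pods exclusively on those nodes, it is usually paired with a node selector or node affinity rule.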

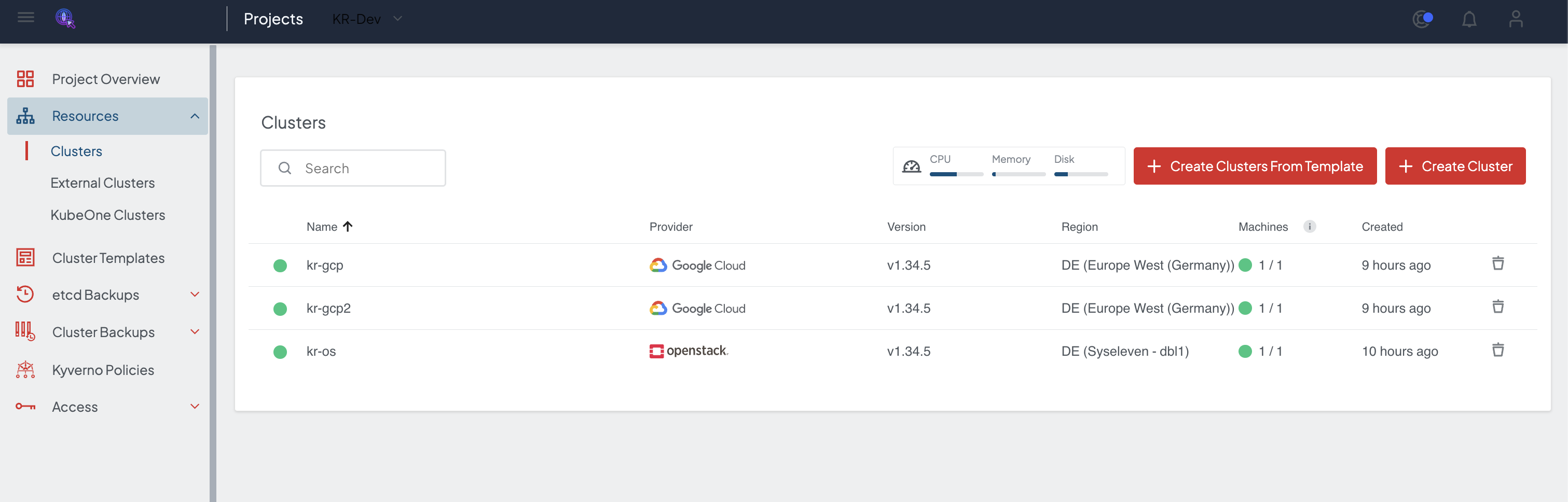

Making UI and User Experience Even More Seamless

You will notice various visual and workflow refinements resulting from a sweeping cleanup of dashboard components, making cluster creation, GPU allocation, and observability workflows snappier and more intuitive.

Project Search Improvements: Project search now supports backend filtering by project fields, cluster name, and cluster ID for enhanced discoverability.

vSphere Cluster Tagging Enhancements: Users can now view predefined category tags for vSphere clusters and manually add additional tags as needed.

Event Rate Limit Enhancements: Dashboard now supports all limit types of the Kubernetes EventRateLimit admission plugin: Server, Namespace, User, and SourceAndObject, enabling flexible event rate limiting policies.

Admin Panel:

- Default: Event rate limit settings can be set as defaults, which are applied when creating a new User Cluster.

- Enforced: Event rate limit policies can be enforced globally. Once enforced, they can’t be modified or removed in the cluster wizard.
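To illustrate the four limit types, here is a sketch of the upstream configuration format of the Kubernetes EventRateLimit admission plugin. The numbers are illustrative only, and the fields KKP exposes in the dashboard or Cluster spec may be named differently from this upstream file.

```yaml
# Upstream EventRateLimit admission plugin configuration (illustrative values).
apiVersion: eventratelimit.admission.k8s.io/v1alpha1
kind: Configuration
limits:
  # One shared bucket for all event requests hitting the API server.
  - type: Server
    qps: 5000
    burst: 20000
  # One bucket per namespace; cacheSize bounds how many buckets are tracked.
  - type: Namespace
    qps: 50
    burst: 100
    cacheSize: 2000
  # One bucket per user making event requests.
  - type: User
    qps: 10
    burst: 50
  # One bucket per combination of event source and involved object.
  - type: SourceAndObject
    qps: 10
    burst: 50
```

Each entry is an independent token bucket: `qps` is the sustained rate and `burst` is the short-term allowance on top of it.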

White-Labeling Improvements: KKP now supports easier white-labeling and branding. This allows customization of several UI elements, including the logo, colors, fonts, favicon, background, and page title.

Improved Machine Type Selection UI in Cluster Creation: Machine types are now presented in a structured, searchable, and filterable table with columns for instance name, vCPU, memory, and description. Users can filter between standard CPU and GPU-enabled instances (where supported).

nftables as Default Proxy Mode: Kubernetes 1.35+ clusters with a non-Cilium CNI plugin now default to nftables instead of ipvs, aligning with the upstream Kubernetes direction.
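For context, this is roughly what the proxy mode setting looks like in upstream kube-proxy configuration. It is a sketch of the upstream `KubeProxyConfiguration` format only. KKP manages kube-proxy for you, so you would not normally write this file by hand, and the corresponding KKP setting is not shown here.

```yaml
# Upstream kube-proxy configuration fragment selecting the nftables backend.
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: nftables
```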

Updated lifecycle and platform support

- Kubernetes 1.35 Support: Stay up-to-date with the latest from the community with added support for Kubernetes version 1.35.

Have a good time trying out the new features!

We couldn’t have done this without the continuous feedback from our incredible community. We hope you find these new features and improvements valuable for your projects!

Thank you for being a part of the Kubermatic community, and we look forward to your feedback on KKP 2.30. You can find more details about this release in the changelog. If you find our contributions valuable, we kindly encourage you to leave a star on our GitHub repository. As always, please don’t hesitate to reach out with any questions or suggestions via our Contact Us form.