Introduction

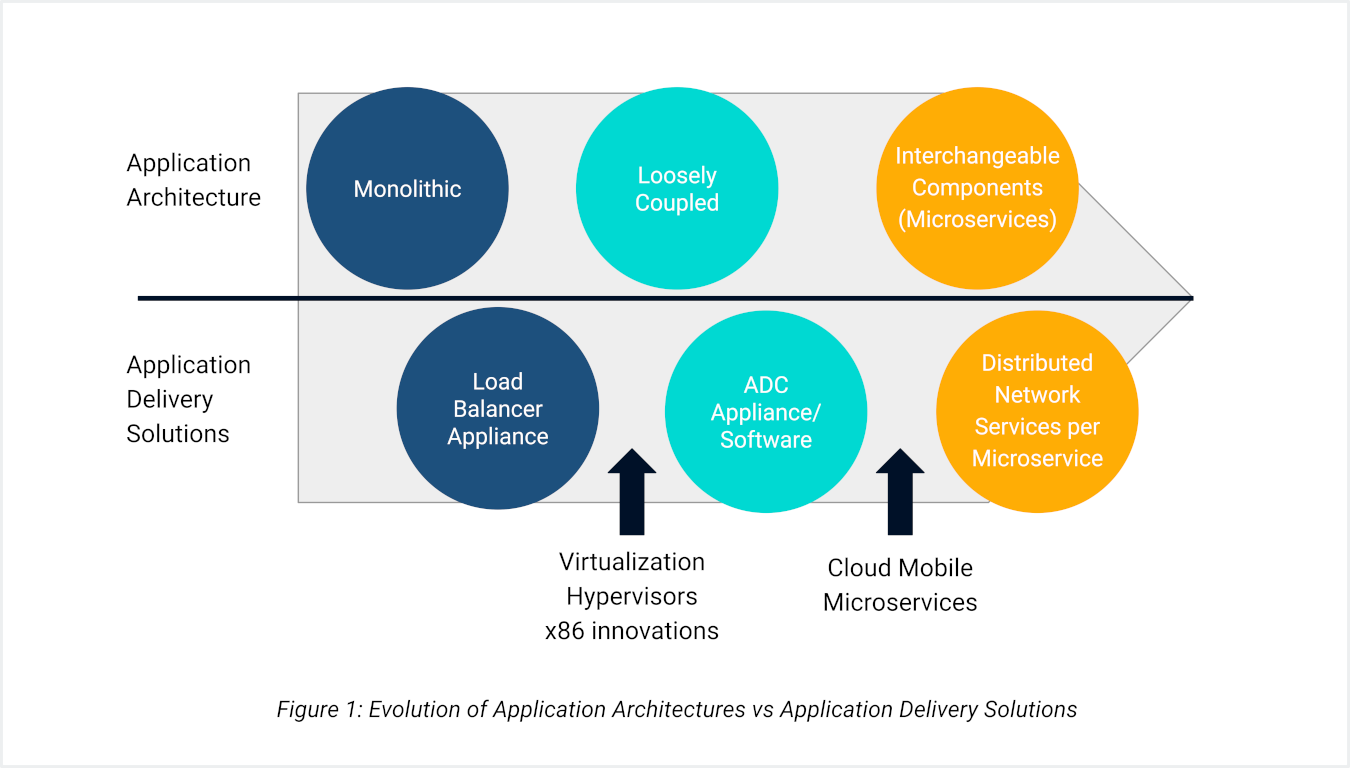

Throughout the years, application architecture has evolved from client-server to service-oriented to cloud-native microservices-based architectures. This evolution significantly impacts application development methods and approaches to scalability, security, and, most importantly, application delivery.

Unfortunately, today’s Application Delivery Controllers (ADCs), also known as load balancers, fall short. Demand has evolved: what once required a single service now requires many distinct services. In this era of service explosion, IT teams will struggle to manage the application lifecycle unless the modern ADC is “microservices aware” and can be driven through APIs and orchestration frameworks to provision, configure, and manage every microservice.

This paper describes how application architectures have evolved and how KubeLB’s distributed microservices approach can dramatically reduce the operational impact of microservices-based application architecture.

The Monolithic Architecture

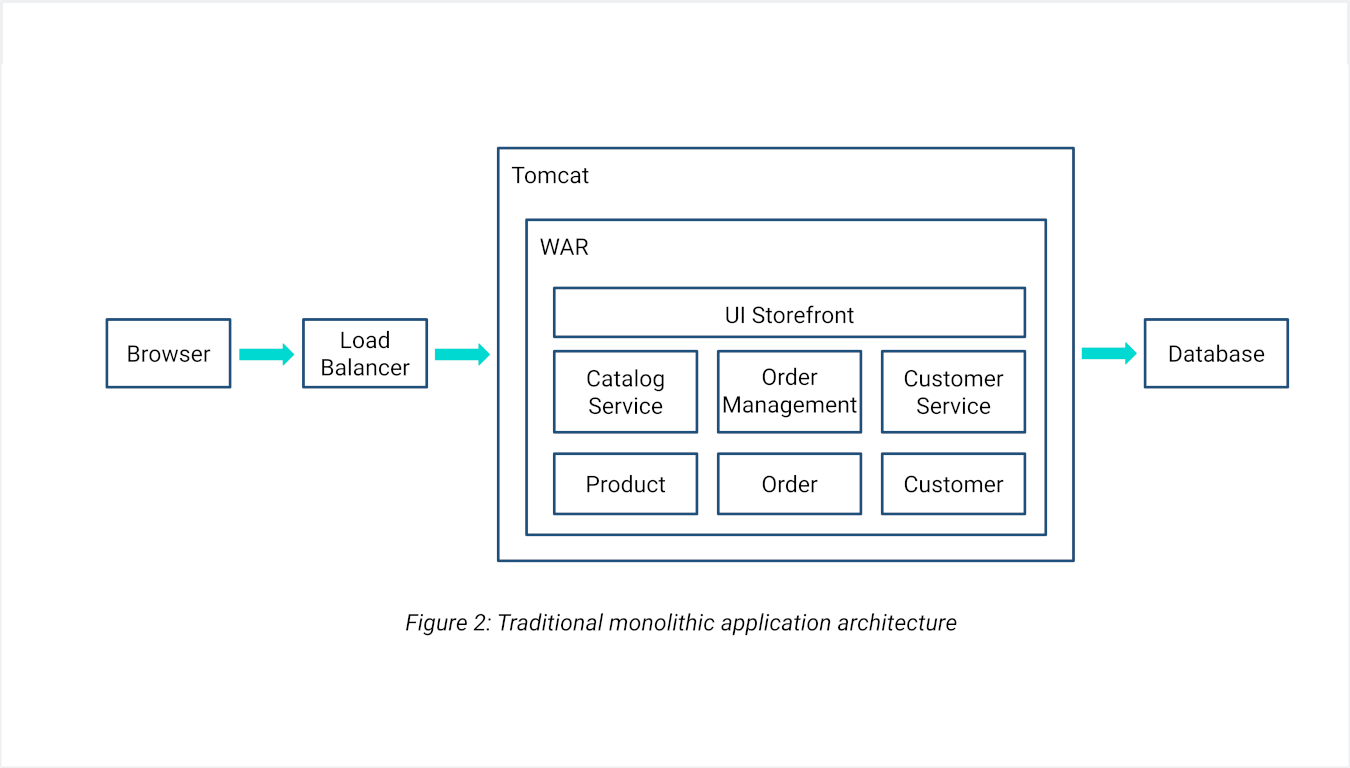

In the early days of web application development, the predominant enterprise application architecture bundled all server-side components of the application into a single unit. Many Java enterprise applications, for instance, ship as a single WAR or EAR file.

Consider developing an online store that accepts orders, verifies inventory and available credit, and handles shipments. This application comprises various components, including the front-end UI responsible for the user interface, and services dedicated to managing the product catalog, processing orders, and handling accounts. The services utilize a shared domain model, including Product, Order, and Customer. Despite its logically modular design, the application is deployed as a monolith — a single WAR file running on a web container like Tomcat.

Simple to Develop

IDEs and development tools are oriented toward building a single application. Testing and deploying such an application is straightforward since everything lives in one deployable unit.

Works for Small Apps

The monolithic approach works well for relatively small applications with limited team sizes and modest scaling requirements.

Becomes Cumbersome

When dealing with complex applications, the monolithic architecture becomes cumbersome, posing serious challenges for scaling, experimentation, and technology evolution.

Adopting a new framework often requires rewriting the entire application, which is risky and impractical. To ship a change to one component, you have to build and deploy the entire monolith, which is complex, risky, and time-consuming. When one service is memory-intensive and another is CPU-intensive, every server must be provisioned with enough capacity for all of them, which drives up cost.

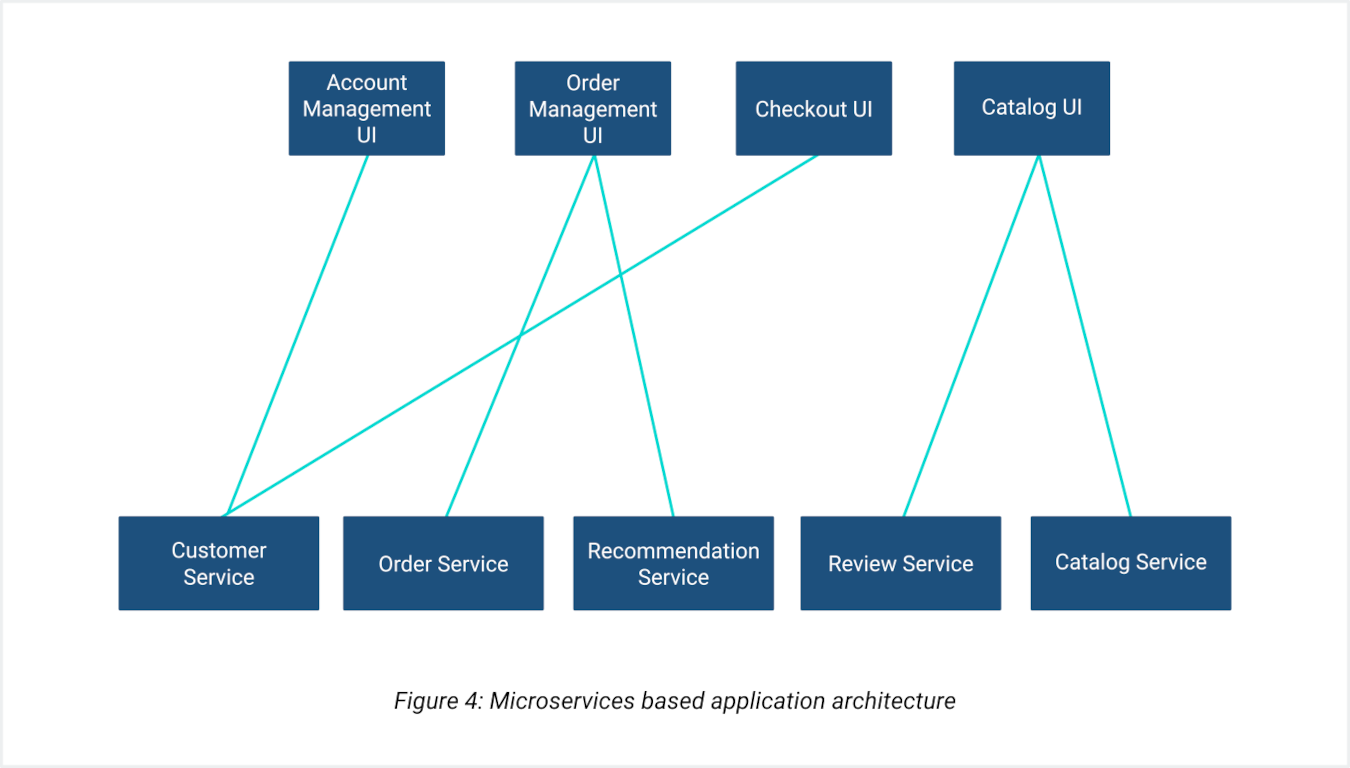

The Cloud-Native Microservices Architecture

The cloud-native microservices-based architecture is designed to address the issues seen with monolithic architecture. The application is decomposed into distinct services that are deployed independently. Each microservice is dedicated to a specific business function and contains only the operations essential to that function. This architecture relies on tools like Kubernetes, ArgoCD, Flux, and other cloud-native projects.

Enhanced Developer Productivity

Each service is relatively small, with a more understandable codebase for developers. This enhances developer productivity since teams are now only focused on a subset of the application; the IDE is more efficient, and running and testing the application is less time-consuming.

Independent Deployment

Since each service is isolated and does not depend on other services, developers can work on a specific service without waiting on other teams or silos within the organization. This makes continuous development, testing, and deployment far easier.

Granular Scaling

The biggest advantage of this architecture is the ability to configure scaling, underlying hardware, and resource requirements (CPU, GPU, or memory intensive) per service. This can greatly enhance the throughput of applications while reducing the cost incurred.
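The cost argument can be made concrete with a small back-of-the-envelope calculation. The sketch below is a simplified model, not a sizing tool: the service names follow the online store example, while the CPU, memory, and instance figures are invented purely for illustration. It assumes every monolith copy must carry all services and that the number of copies is dictated by the busiest service.

```python
# Hypothetical resource needs per instance and instance counts under load.
# All figures are illustrative assumptions, not measurements.
SERVICES = {
    "catalog":  {"cpu": 1, "mem_gib": 8, "instances": 2},  # memory-intensive
    "orders":   {"cpu": 4, "mem_gib": 2, "instances": 6},  # CPU-intensive
    "accounts": {"cpu": 1, "mem_gib": 1, "instances": 1},
}


def monolith_footprint(services):
    """Total (cpu, mem_gib) when every copy of the app contains every service.

    The copy count is set by the service that needs the most instances,
    and each copy is sized for the sum of all services.
    """
    copies = max(s["instances"] for s in services.values())
    cpu = copies * sum(s["cpu"] for s in services.values())
    mem = copies * sum(s["mem_gib"] for s in services.values())
    return cpu, mem


def microservices_footprint(services):
    """Total (cpu, mem_gib) when each service is scaled on its own."""
    cpu = sum(s["cpu"] * s["instances"] for s in services.values())
    mem = sum(s["mem_gib"] * s["instances"] for s in services.values())
    return cpu, mem


print("monolith:     ", monolith_footprint(SERVICES))       # (36, 66)
print("microservices:", microservices_footprint(SERVICES))  # (27, 29)
```

Under these assumed numbers the monolith reserves more than twice the memory, because six copies of the memory-hungry catalog are deployed only to satisfy demand for the orders component.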

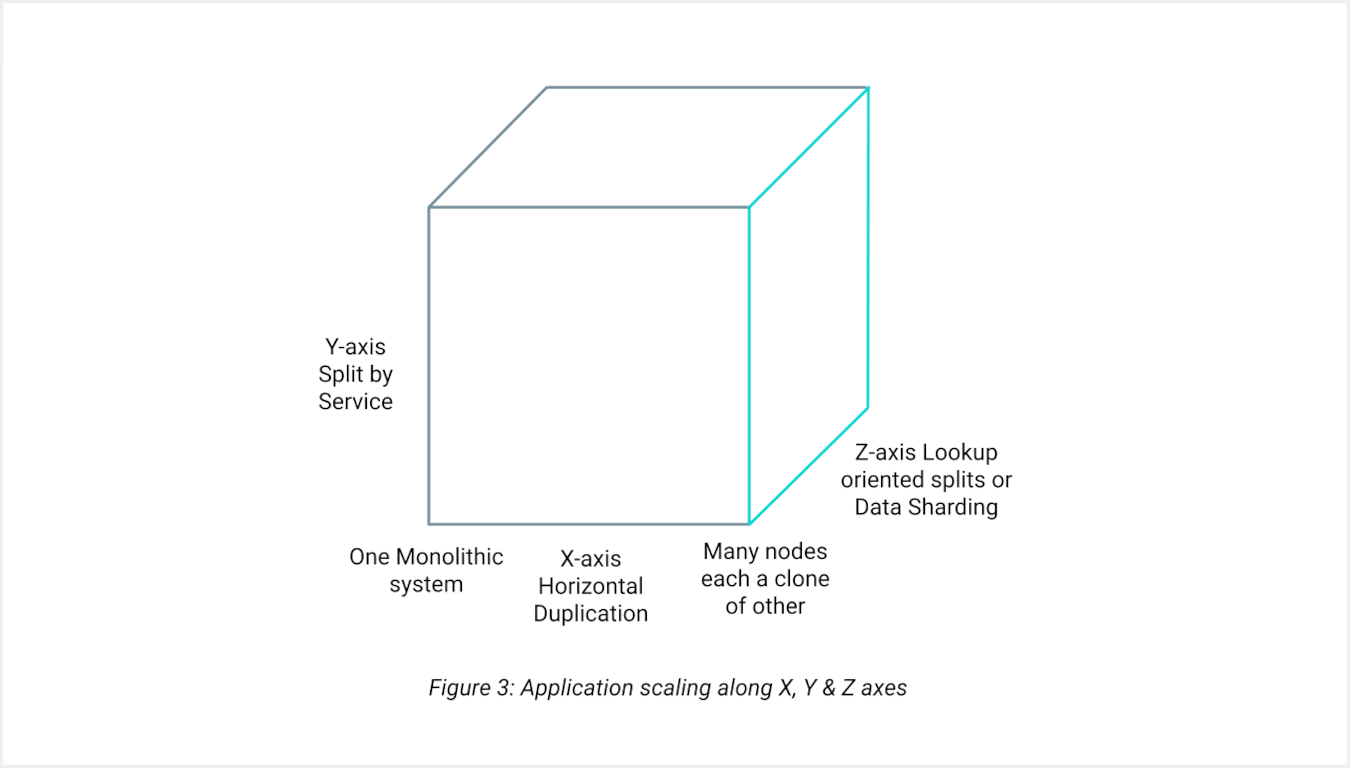

Scaling Microservices along X, Y & Z Axes

X-Axis Scaling

Horizontal scaling involves running multiple copies of the entire application behind a load balancer. Each instance of the application is referred to as a horizontal slice. This is the most common form of scaling for web applications.

Y-Axis Scaling

Y-axis scaling, also known as functional decomposition, splits the application into distinct services by function, each of which can run on its own machines. This is the most common form of scaling for microservices, since it enables independent resource allocation per service.

Z-Axis Scaling

Z-axis scaling splits the application by data partitioning: every instance runs the same code but is responsible for only a subset of the data, such as a range of customers or a geographic region. Together, the three axes form the “Scale Cube,” a three-dimensional scalability model popularized by The Art of Scalability.
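In the Scale Cube, the three axes correspond to three different routing decisions: X picks any identical clone, Y picks a service by function, and Z picks a partition by data. The following minimal sketch illustrates each decision in isolation. The clone, service, and shard names are hypothetical, and the round-robin and hash-based strategies are merely common choices assumed here for illustration.

```python
import itertools
import zlib

# X-axis: identical clones behind a load balancer (round-robin assumed).
CLONES = ["app-1", "app-2", "app-3"]
_next_clone = itertools.cycle(CLONES)


def route_x():
    """Return the next clone in rotation; every clone can serve any request."""
    return next(_next_clone)


# Y-axis: functional decomposition; route by what is being requested.
SERVICES_BY_PREFIX = {
    "/catalog": "catalog-svc",
    "/orders": "orders-svc",
    "/accounts": "accounts-svc",
}


def route_y(path):
    """Return the service that owns the given URL path."""
    for prefix, service in SERVICES_BY_PREFIX.items():
        if path.startswith(prefix):
            return service
    raise LookupError(f"no service owns {path}")


# Z-axis: data partitioning; route by whose data the request concerns.
SHARDS = ["shard-0", "shard-1", "shard-2", "shard-3"]


def route_z(customer_id):
    """Map a customer to a fixed shard using a hash that is stable across runs."""
    # crc32 is deterministic, unlike Python's salted built-in hash() for str.
    return SHARDS[zlib.crc32(customer_id.encode("utf-8")) % len(SHARDS)]
```

In practice the axes are combined: a request for `/orders` is first routed to the orders service (Y), then to the shard holding that customer's data (Z), and finally to one of several replicas of that shard (X).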

Rise of Containerization and Orchestration Tools

As microservices became more prevalent, tools like Docker, Docker Swarm, and Kubernetes emerged to simplify deployment and scaling. Docker revolutionized containerization, enabling consistent environments across development and production. Kubernetes advanced these capabilities, offering robust orchestration, management, and scalability for complex, distributed microservices architectures. Its powerful features — such as automated scaling, self-healing, and rolling updates — addressed many challenges associated with deploying and managing microservices at scale, making it the de facto platform for microservices in the industry.

Challenges with Kubernetes

While Kubernetes significantly simplifies the orchestration and management of containerized applications, it introduces challenges — particularly in multi-cluster and multi-tenant environments.

Introducing KubeLB

KubeLB is a software-based next-generation application delivery platform with integrated analytics, which provides secure, reliable, and scalable network services for cloud-native applications.

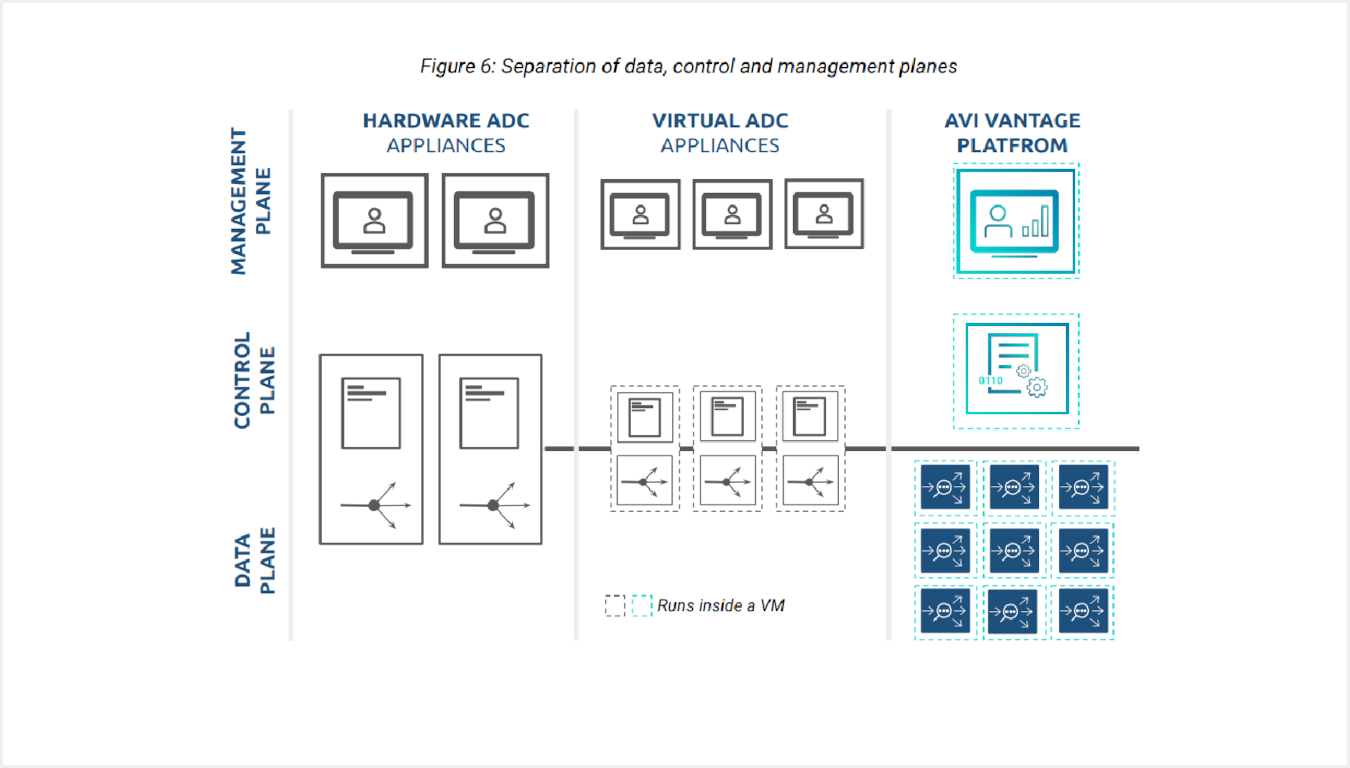

At the heart of KubeLB is a revolutionary architecture based on SDN principles, separating the data plane from the control plane — an industry first for Application Delivery Controllers and Load Balancers.

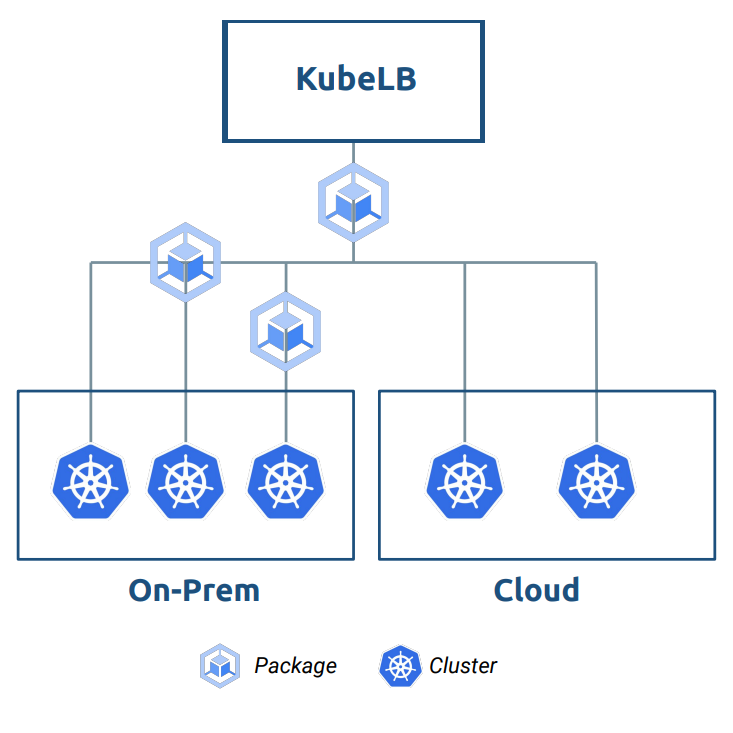

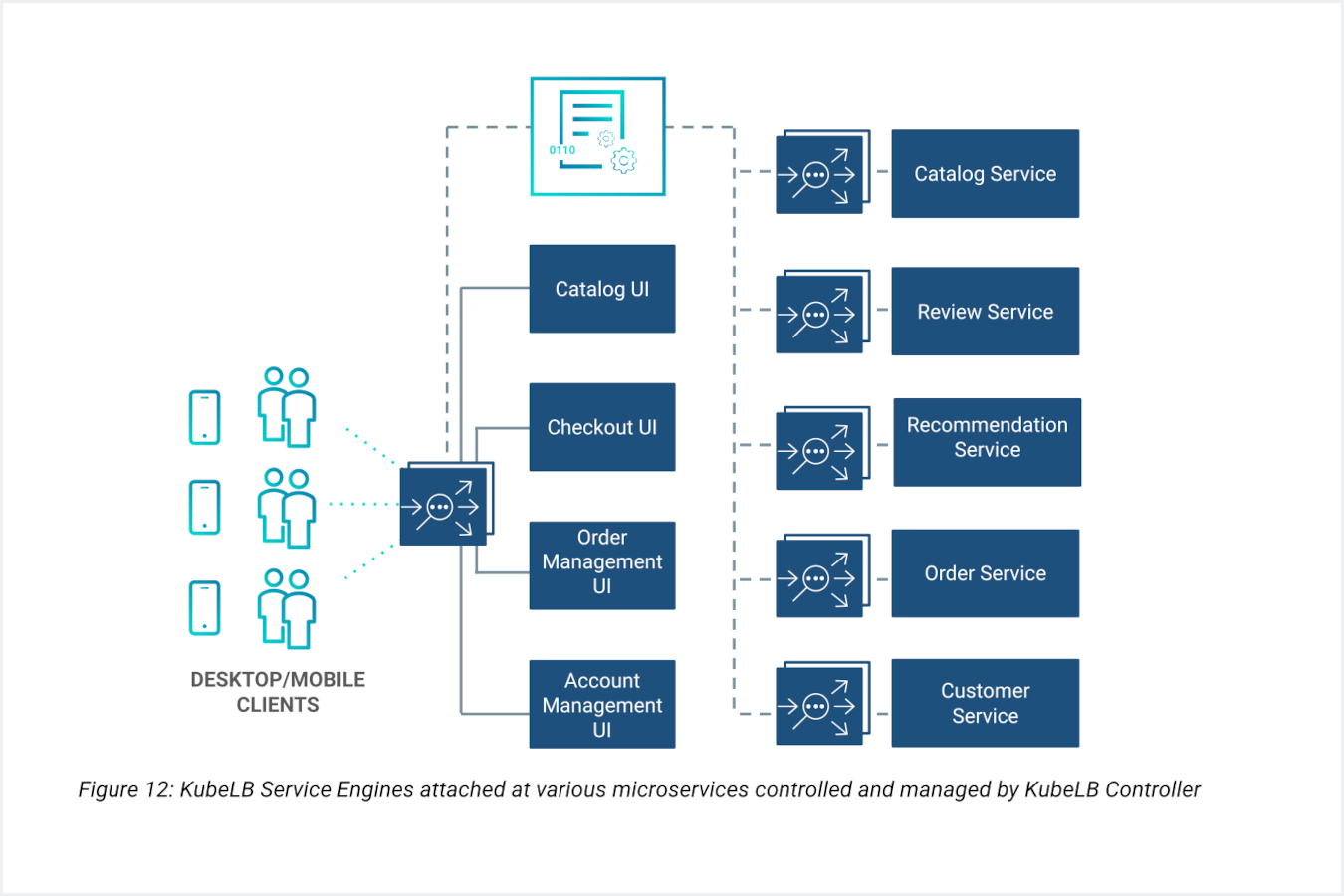

KubeLB enables seamless scaling of application delivery services within and across data centers and cloud locations while maintaining a single point of management and control. The load balancer operates as a service, so multiple tenants can leverage the same software. It detects the tenant environment and acts accordingly.

Distributed Architecture

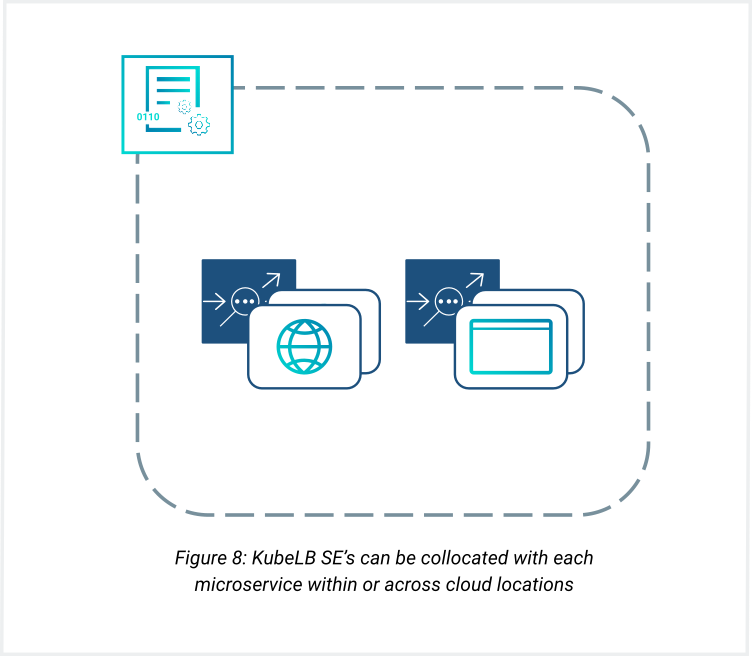

The distributed load balancers, implemented with Cilium and Envoy, provide comprehensive application delivery services such as load balancing, application acceleration, and application security. Cilium and Envoy can be co-located with applications within and across cloud locations and tuned for higher performance.

Placement Intelligence

Using KubeLB's rich data, control, and management plane services, Cilium and Envoy can be placed close to the application's microservices and tuned for higher performance and faster client responses.

End-to-End Analytics

The integrated data collectors gather end-to-end timing, metrics, and logs for each user-to-application transaction, providing actionable insights about end-user experience, application performance, infrastructure utilization, and anomalous behavior.

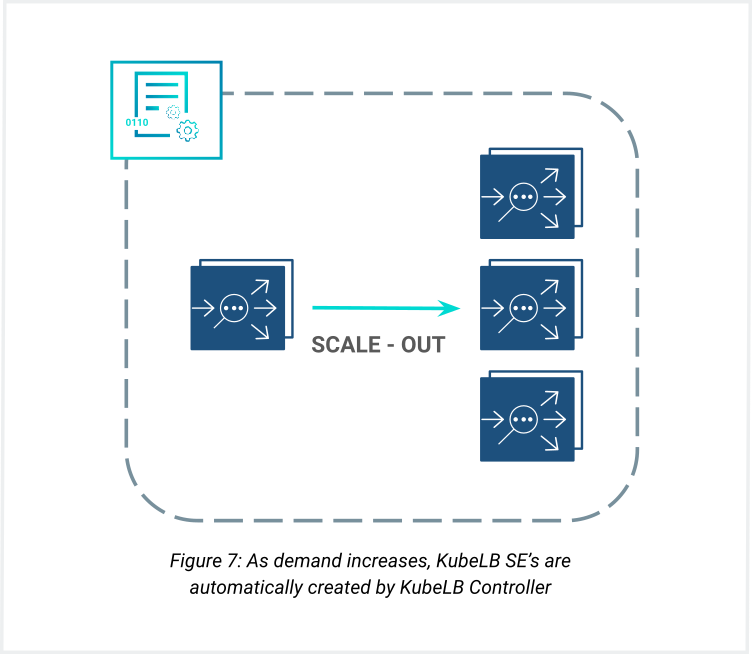

Elastic Data Plane

KubeLB's unique distributed architecture allows Service Engines to scale automatically without human intervention, meeting real-time requirements of microservice-based applications across hundreds of tenants and thousands of applications.

Key Capabilities

Analytics-Driven Application Delivery

KubeLB continuously monitors the traffic patterns of each microservice application. When a customizable threshold is met, additional Cilium and Envoy instances are scaled out to absorb the increased traffic. Furthermore, the inline analytics engine can send a trigger based on ambient load to scale the backend microservice applications up or down.
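As an illustration of threshold-driven scaling, the sketch below applies the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: the desired replica count is the current count multiplied by the ratio of observed load to target load, rounded up. This paper does not specify the rule KubeLB itself uses, so the function name, parameters, and default limits below are assumptions chosen for the example.

```python
import math


def desired_replicas(current_replicas, current_load, target_load,
                     min_replicas=1, max_replicas=10):
    """Return the replica count that brings per-replica load back to target.

    current_load and target_load are per-replica averages in the same unit
    (for example requests per second). The result is clamped to the
    [min_replicas, max_replicas] range.
    """
    if target_load <= 0:
        raise ValueError("target_load must be positive")
    desired = math.ceil(current_replicas * current_load / target_load)
    return max(min_replicas, min(max_replicas, desired))


# Two replicas each see 900 req/s against a 500 req/s target: scale out to 4.
print(desired_replicas(2, 900, 500))
# Four replicas each see only 100 req/s: scale in to 1.
print(desired_replicas(4, 100, 500))
```

The clamp matters as much as the formula: the upper bound protects the budget during traffic spikes, and the lower bound keeps the service available when traffic is idle.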

Elastic Scale

The elastic data plane of KubeLB can dynamically scale out and scale in to meet the real-time requirements of microservice-based applications across hundreds of tenants and thousands of applications. Cilium and Envoy allow network services for each microservice to be individually scaled up or down. Whether microservices run on a single physical server, on different servers in a single data center, or across different data centers, Cilium and Envoy automatically discover and locate themselves in the closest possible proximity to each microservice.
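The three proximity levels named above (same server, same data center, different data center) suggest a simple ranking. The sketch below is one hypothetical way to express such a placement decision; the `Location` type, the scoring, and the example names are assumptions for illustration and do not describe KubeLB's actual discovery mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Location:
    datacenter: str
    server: str


def proximity(a, b):
    """Return 0 for the same server, 1 for the same data center, 2 otherwise."""
    if a.datacenter != b.datacenter:
        return 2
    return 0 if a.server == b.server else 1


def place_near(microservice, candidates):
    """Pick the candidate location closest to the microservice."""
    if not candidates:
        raise ValueError("no candidate locations")
    return min(candidates, key=lambda c: proximity(microservice, c))


orders = Location("fra", "node-3")
candidates = [
    Location("ams", "node-1"),
    Location("fra", "node-1"),
    Location("fra", "node-3"),
]
print(place_near(orders, candidates))  # the candidate on fra/node-3
```

A production system would more likely rank candidates by measured latency or topology labels, but the ordering principle is the same.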

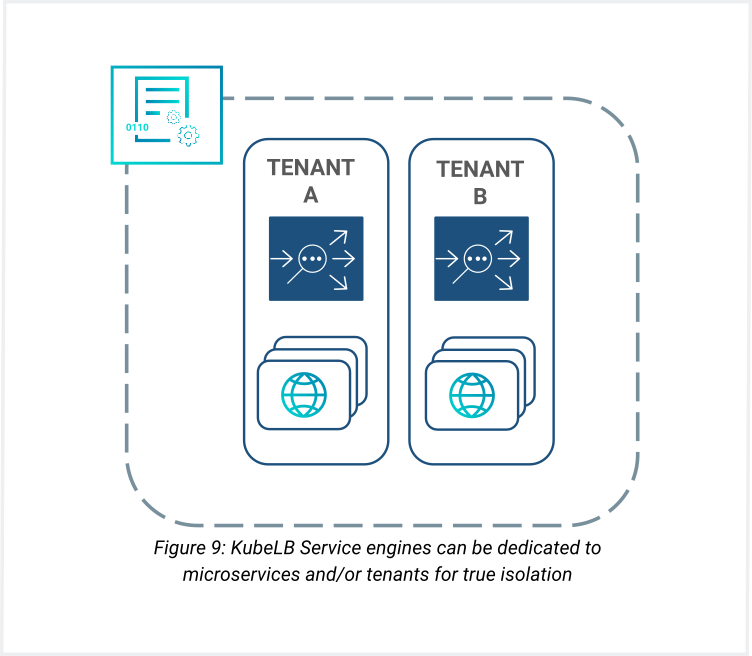

Dataplane Isolation for Tenants and Applications

To avoid sharing appliances between critical applications, tenants and applications are allocated their own Service Engine for data plane isolation. This eliminates the “noisy neighbor” problem wherein a rogue microservice tenant could potentially impact the performance of an adjacent application. KubeLB’s per-tenant, dedicated micro load balancers deliver true multi-tenant application services.

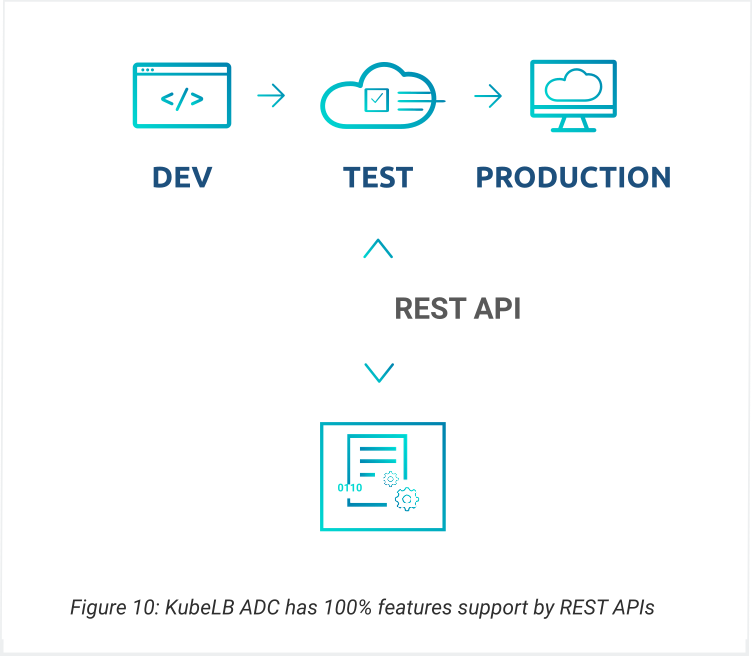

Programmability

All interactions with the KubeLB Controller are through native Kubernetes APIs, which enable native integration with kubectl. DevOps automation tools like Crossplane, Terraform, or Ansible are also natively supported.
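Because the interface is the Kubernetes API, requesting a load balancer looks like creating any other Kubernetes object. The sketch below builds a standard `Service` of type `LoadBalancer` as a plain dictionary and prints it as JSON, a format `kubectl apply -f` accepts. The service name, namespace, label, and ports are placeholders, and any KubeLB-specific fields or annotations that may be required are not covered in this paper and are therefore omitted.

```python
import json


def load_balancer_service(name, namespace, app_label, port, target_port):
    """Build a core/v1 Service manifest of type LoadBalancer."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "type": "LoadBalancer",
            # Traffic is forwarded to pods carrying this label.
            "selector": {"app": app_label},
            "ports": [
                {"port": port, "targetPort": target_port, "protocol": "TCP"}
            ],
        },
    }


# Placeholder values for the online store's order service.
manifest = load_balancer_service("orders", "shop", "orders", 80, 8080)
print(json.dumps(manifest, indent=2))
```

Saving the output to a file and running `kubectl apply -f` on it submits the request through the same API that tools such as Terraform or Ansible use.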

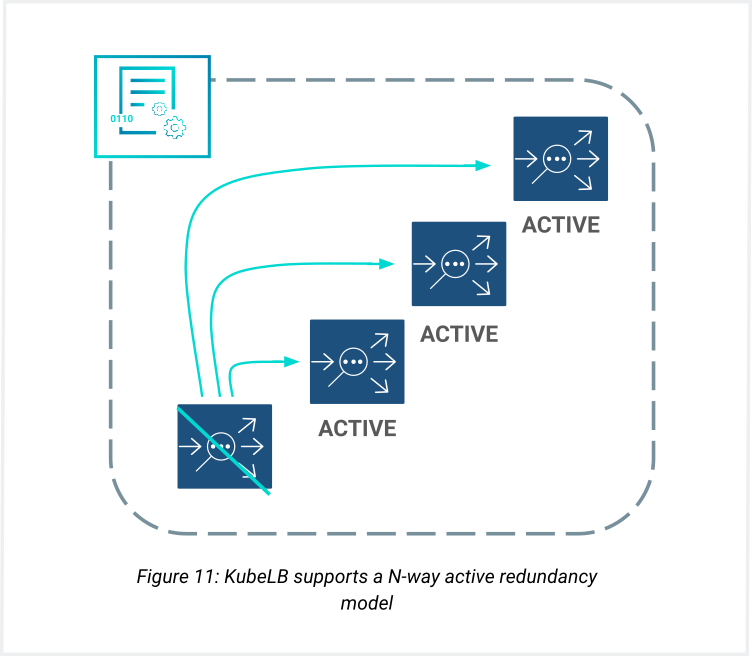

N-Way Active Redundancy

Using redundancy principles from web-scale datacenters, KubeLB provides N-Way Active-Active redundancy along with Active-Active and Active-Standby availability options, ensuring your applications remain available under any failure scenario.

How Load Balancers Need to Evolve

Each group of Cilium and Envoy instances can be associated with a specific tenant. In a multi-tenant environment, traffic for a particular application is isolated to that tenant's group. KubeLB can manage multiple such groups, with the Kubernetes role-based access control mechanism ensuring that users logged into a particular tenant can only view the details of that tenant.
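The isolation model can be pictured as two lookups: a tenant maps to its own data plane group, and a user maps to exactly one tenant whose resources they may see. The toy registry below illustrates that idea only. It is not how Kubernetes RBAC is implemented (in a real cluster this is expressed with Roles and RoleBindings), and the class, method, and tenant names are all hypothetical.

```python
class TenantRegistry:
    """Toy model of per-tenant data plane groups and tenant-scoped visibility."""

    def __init__(self):
        self._apps_by_tenant = {}   # tenant -> set of application names
        self._tenant_of_user = {}   # user -> tenant

    def bind_user(self, user, tenant):
        """Grant a user access to exactly one tenant."""
        self._tenant_of_user[user] = tenant

    def expose(self, tenant, app):
        """Register an application under a tenant."""
        self._apps_by_tenant.setdefault(tenant, set()).add(app)

    def group_for(self, tenant):
        """Each tenant gets its own data plane group, never a shared one."""
        return f"dataplane-{tenant}"

    def visible_apps(self, user):
        """Return only the applications of the tenant the user belongs to."""
        tenant = self._tenant_of_user.get(user)
        if tenant is None:
            raise PermissionError(f"{user} is not bound to any tenant")
        return sorted(self._apps_by_tenant.get(tenant, ()))


registry = TenantRegistry()
registry.bind_user("alice", "acme")
registry.bind_user("bob", "globex")
registry.expose("acme", "shop")
registry.expose("acme", "billing")
registry.expose("globex", "crm")

print(registry.visible_apps("alice"))  # ['billing', 'shop']
print(registry.visible_apps("bob"))    # ['crm']
```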

True Control/Data Plane Separation

There needs to be a complete and true separation of control and data plane within the ADC, with the ability to distribute data plane resources dynamically across different hardware platforms and public/private clouds — exactly how application microservices can be distributed.

Data Plane Independence for Multi-Tenancy

ADCs must achieve data plane independence (isolation) to enable multi-tenancy, especially in cloud environments. This mirrors how microservices operate and change independently without disrupting one another, avoiding the “noisy neighbor” effect.

Application Affinity

ADCs must achieve “application affinity,” where ADC resources are aligned with the specific microservices they serve. This approach offers two significant advantages. First, colocating the ADC resource alongside the microservice improves application response time. Second, this tight alignment lets ADC resources follow the microservice lifecycle automatically, without manual intervention, which significantly reduces management complexity.

Self-Service SDN Programmability

ADCs must fulfill the self-service programmability and efficiency promise of SDN. Only through true control/data plane separation and the complete centralization of control functions can the ADC deliver on that promise, enabling one-to-one communication between its controller and the application’s control elements through RESTful APIs.

Summary

Application architecture has evolved from purpose-built monolithic code to a tightly federated collection of modular and reusable microservices. The move to microservice-based development means existing assumptions around traffic patterns, load balancing scale, and service requirements are no longer valid.

KubeLB is an elastically scalable load balancer with a distributed data plane that can span, serve, and scale with apps across various on-premises and cloud locations. The distributed data plane empowers tenants to obtain application affinity at the microservice level, significantly enhancing overall application performance. The clean separation of planes also enables the creation of a unified, centralized control plane that significantly alleviates operational complexity associated with integrating, operating, and managing each ADC appliance across locations individually.

In summary, KubeLB is a highly flexible, cost-focused, scalable, and efficient load balancer — particularly suited to multi-tenant service providers.

Case Study: Evolving with Application Architecture

In the early stages, a company focuses predominantly on keeping complexity and overhead low so that software can be developed rapidly. The rush to market means developers typically do not have the luxury of designing the application for scalability, high availability, and redundancy. With KubeLB ADC, high availability and scalability can be achieved quickly by running the application on a pair of web servers behind a pair of load balancers, keeping operating costs to a minimum.

Stage 1: Launch

Deploy on a pair of web and database servers behind KubeLB ADC. High availability and scalability are achieved immediately with minimal operational cost.

Stage 2: Growth

As demand for the business grows, scale quickly by adding more resources (X-axis scaling) behind KubeLB ADC. Static content management challenges are mitigated using caching engines on the KubeLB ADC.

Stage 3: Scale

As popularity grows, rearchitect the application into smaller services/functions. Database partitions evolve along geographical locations. With KubeLB's future-proof, security-focused design, the ADC used on day 1 can still be used as traffic grows. One instance of KubeLB can serve applications located in a data center and in the cloud simultaneously, with its centralized control and management interface making it all manageable.

About Kubermatic

Kubermatic is a leader in Kubernetes and cloud-native technologies, dedicated to empowering organizations with advanced solutions that simplify and optimize IT management. Our products are designed to meet the needs of modern enterprises, providing the tools and support necessary to drive innovation and achieve business success. For more information about KubeLB and other Kubermatic solutions, please visit our website or contact our sales team.