Here we go, our first release of the year is out! With Kubermatic Kubernetes Platform (KKP) 2.16, we now support the Open Policy Agent for improved policy control and have added dynamic data centers and other expanded GitOps capabilities. On top of that, with Machine Learning being one of the hottest topics of the decade, we are committed to continuously enhancing KKP with convenient ML Add-Ons that give data scientists the freedom to always leverage the best cloud for their needs. With KKP 2.16, we are proud to unveil a preview of a Kubeflow integration that provides truly cloud native Machine Learning.

Read on for these and other improvements our team has been busy with over the past months.

Enterprise-Grade Policy Compliance With Open Policy Agent

Keeping your entire technology stack compliant with your organization’s policies can be an operational nightmare. KKP 2.16 introduces an out-of-the-box integration of the Open Policy Agent (OPA) that enables you to centrally manage and enforce policies in microservices, Kubernetes, CI/CD pipelines, API gateways, and more. OPA is an open source project that provides a high-level declarative language that lets you specify policy as code, along with APIs that offload policy decision-making from your software.
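To make the idea of "policy as code" more concrete, here is a minimal sketch in Python. It is not OPA's policy language and not a KKP API: the `Policy` class, `require_labels`, and `evaluate` are hypothetical names invented for this illustration. It only shows the general pattern of keeping rules separate from the software that asks for a decision.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


# Hypothetical, simplified stand-in for a policy engine.
# This is NOT OPA's policy language or API; it only illustrates the pattern.
@dataclass(frozen=True)
class Policy:
    name: str
    # Returns violation messages for one resource (empty list means compliant).
    check: Callable[[Dict], List[str]]


def require_labels(required: List[str]) -> Policy:
    """Build a policy that flags resources missing any of the given labels."""

    def check(resource: Dict) -> List[str]:
        labels = resource.get("metadata", {}).get("labels", {})
        return [f"missing required label: {key}" for key in required if key not in labels]

    return Policy(name="require-labels", check=check)


def evaluate(policies: List[Policy], resource: Dict) -> List[str]:
    """Run every policy against a resource and collect all violations."""
    violations: List[str] = []
    for policy in policies:
        violations.extend(f"{policy.name}: {msg}" for msg in policy.check(resource))
    return violations


# The rules live in one place, separate from the code that asks for a decision.
policies = [require_labels(["owner", "environment"])]

# Illustrative Kubernetes-style resource with only one of the two required labels.
namespace = {
    "kind": "Namespace",
    "metadata": {"name": "team-a", "labels": {"owner": "team-a"}},
}

print(evaluate(policies, namespace))
# → ['require-labels: missing required label: environment']
```

In a real setup, the rules would be written in OPA's own declarative language and evaluated by OPA itself; the sketch only mirrors the separation between policy definitions and the caller.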

For now, users can access OPA via the Kubermatic API. We will add a fully fledged UI integration in one of the upcoming patch releases. Stay tuned!

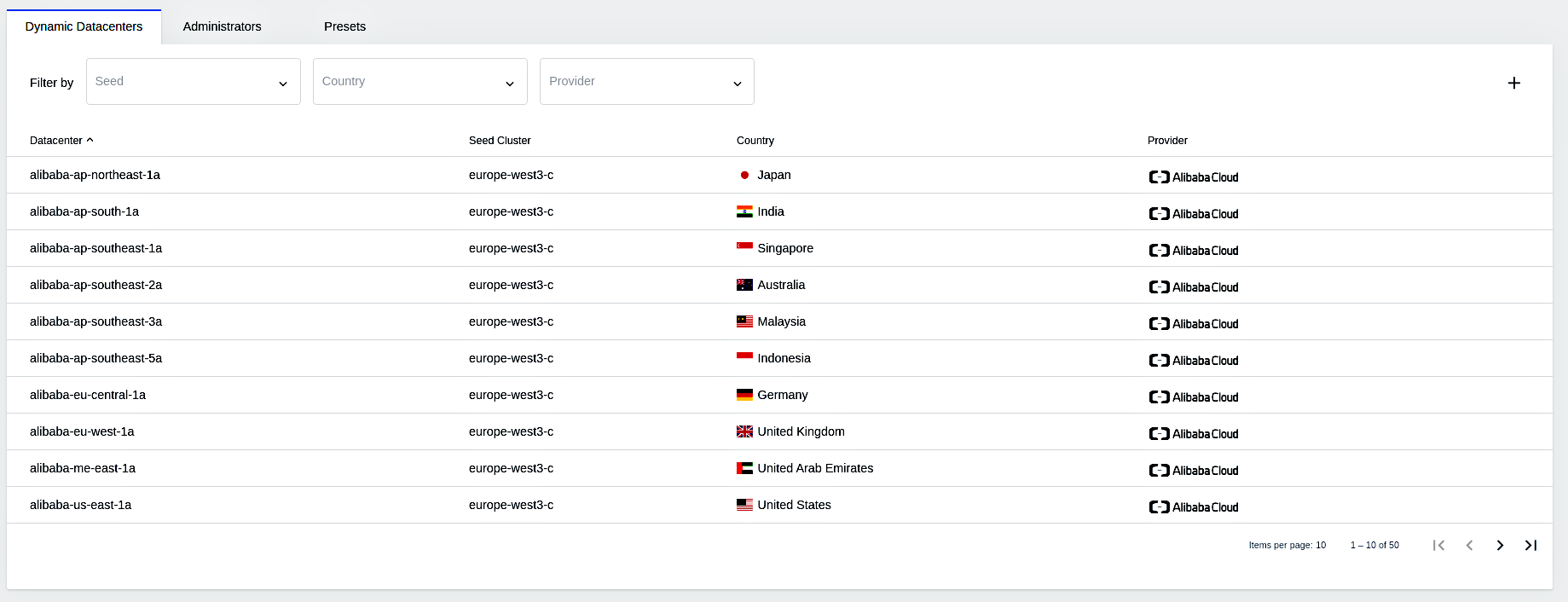

Expanded GitOps Capabilities With Dynamic Data Centers and Other Enhanced Admin Configurations

With KKP 2.16, platform administrators are now able to dynamically configure the data centers they want to use, so they can easily add new ones on the fly.

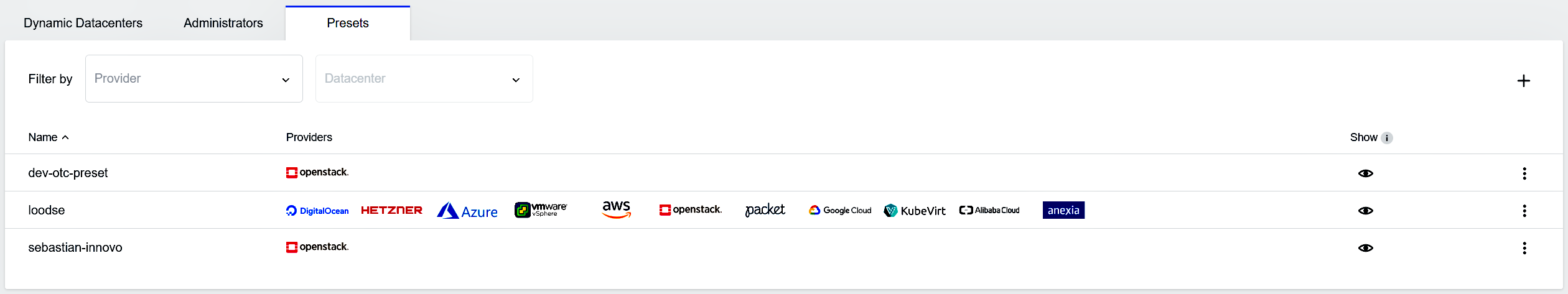

Moreover, the release includes new Preset Management functionality that enables administrators to configure dev, pre-production, and production environments. Users can then simply choose the environment they need when creating a new Kubernetes cluster, and the cluster will automatically have the corresponding configuration attached.

Finally, we introduce the possibility to centrally configure your consumption, providing you with an easy way to manage and control your infrastructure cost.

All configuration can be adjusted in the KKP UI or via Infrastructure-as-Code, helping you to pursue and develop your GitOps approach.

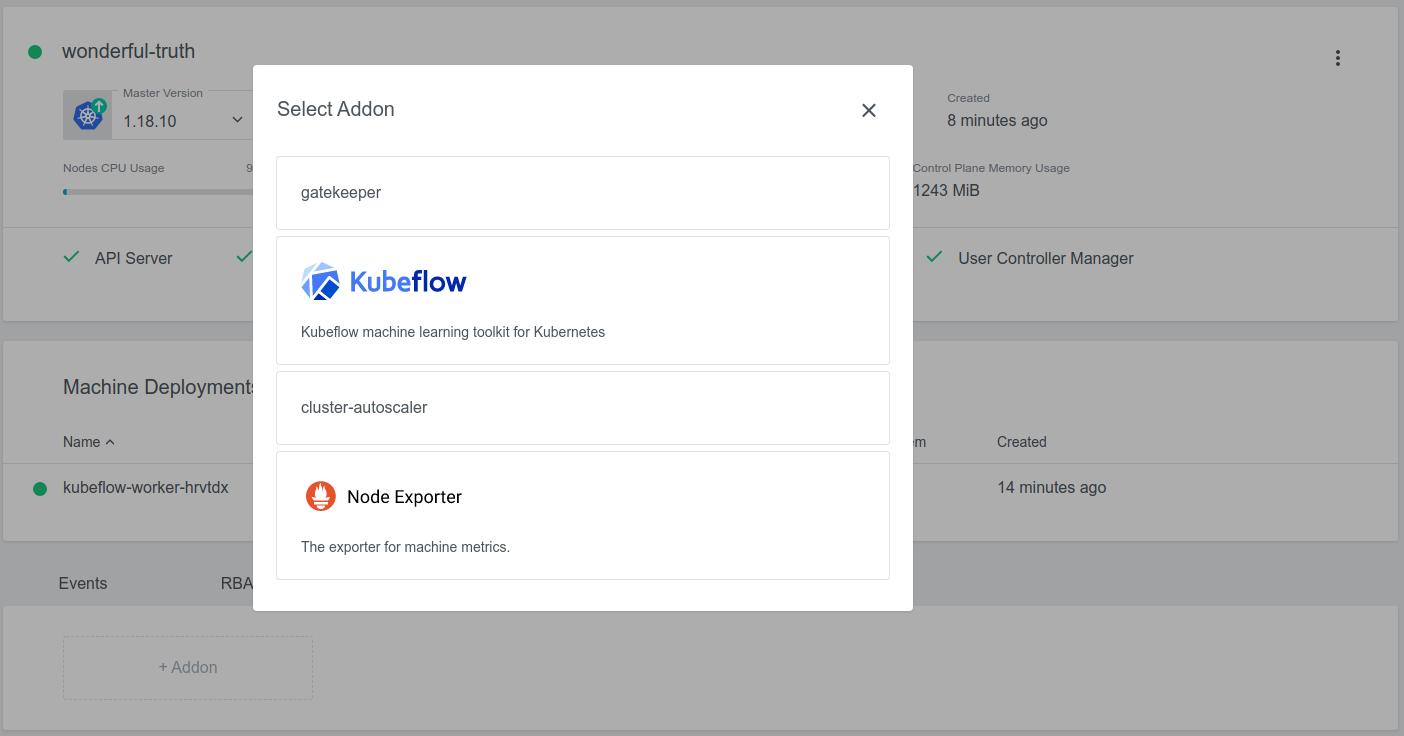

Machine Learning the Cloud Native Way

Machine learning and Kubernetes are a great match, and Kubeflow is a great platform for bringing the two worlds together: the open source project makes deployments of ML workflows on Kubernetes simple, portable, and scalable. With our preview, KKP operators can now easily roll out the Kubeflow platform on top of KKP. This integration lets your data scientists leverage the power of every available cloud and generate their results as efficiently as possible. (Of course, we are curious to hear your feedback. Please feel free to share your thoughts and ideas with us: product@kubermatic.com)

Benefit From Optimized Infrastructure With ARM Support

ARM-powered data centers and edge scenarios are enjoying increasing popularity for their system optimization and cost reduction potential. Since we are dedicated to providing you with the broadest possible infrastructure, KKP 2.16 introduces ARM support. Thanks to this out-of-the-box integration, KKP users can now effortlessly deploy and manage their ARM-based clusters from the central KKP interface.

Deprecate and Remove CoreOS

CoreOS officially reached end-of-life on May 26, 2020. With the 2.16 release, we will stop supporting any cluster using CoreOS. In this blog post, we explain how you can migrate your clusters so that you do not run into major security liabilities from defective clusters. (If you liked CoreOS, we are sure you will like Flatcar Container Linux by the awesome Kinfolk folks as well. Of course, KKP comes with Flatcar support.)

At Kubermatic, we are dedicated to continuously innovating with the cloud native community. We are looking forward to our next release in a few months and hope you are, too. Stay tuned: there is some cool stuff coming up. In the meantime, feel free to get in touch via GitHub, Slack, or our Forum.

Learn More

- Take a closer look at our Kubeflow Addon

- Check out the entire Changelog

- Join our live Office Hours on February 17

- More information on CoreOS end-of-life